What is a graduate outcome?

This blog was contributed by Tom Fryer, a PhD researcher at the University of Manchester. Tom has written for HEPI before about comprehensive universities. You can find Tom on Twitter @TomFryer4.

There is a lot to like about Tuesday’s HEPI blog from Johnny Rich and Stella Fowler on the contribution of engineering courses to social mobility, part of the Engineering Professors’ Council’s wider report Engineering Opportunity written along with Charlotte Bailey. Particularly important is the call for a more radical approach to contextual admissions that considers unequal opportunities to access qualifications such as A Level Maths, as well as unequal opportunities to attain certain grades. Similarly, the authors offer a compelling critique of naïve uses of metrics: comparing university courses on the basis of graduate employment rates is a ‘poor proxy measure…[of] what constitutes a good university course’.

While there is a lot to like, there is also an opportunity to clarify some of the discussion around graduate employment/salary metrics and what they tell us about higher education quality or value. The authors argue that these metrics are poor proxies of higher education quality, but I think we need to go a step further: these metrics are not merely a poor proxy; they are not a measure of higher education quality or value at all. The metrics do not need tweaking to make them better; they need to be rejected as a square peg in a round hole.

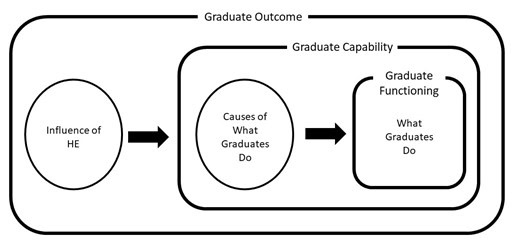

To make this case, I need to quickly introduce and distinguish three concepts, adapted from my recent paper in Higher Education Policy (an open-access author manuscript is available here).

- Graduate functionings – what graduates do.

- Graduate capabilities – the causes of what graduates do.

- Graduate outcomes – the influence of higher education on the causes of what graduates do.

Let me illustrate this through the example of graduate employment. Graduate functionings are descriptions of whether graduates are working or not and the type of work they are doing; these are things that graduates do in the world. The causes that underlie these patterns of graduate employment, say the skills that make someone employable or the social capital that enables them to get a job, are graduate capabilities. These graduate capabilities may themselves be influenced by some aspects of higher education, say the impact of a particular pedagogy or extra-curricular activity. The influence of higher education on these graduate capabilities is a graduate outcome. Distinguishing these three concepts (graduate functionings, capabilities and outcomes) helps us to avoid conflating:

- the things graduates do;

- the causes of the things graduates do; and

- the influence of higher education on the causes of the things graduates do.

There is an important difference between graduate functionings on the one hand, and graduate capabilities and outcomes on the other: while the latter two consider causes, a graduate functioning makes no causal claims and instead offers only an empirical description of the world. To say X% of graduates are employed makes no reference to what causes this employment rate. It is possible that some aspects of higher education influence employment, but it is also perfectly possible that graduate employment rates are influenced mainly by non-higher education factors, such as family networks and connections.
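A toy calculation can make this point concrete. The Python sketch below uses entirely hypothetical numbers (the employment probabilities, the cohort shares and the `course_effect` parameter are all assumptions made for illustration, not figures from any report or dataset). It compares two imagined courses: one that adds nothing to its students' chances but recruits mostly well-networked students, and one that does improve its students' chances but recruits mostly students without such networks.

```python
# Hypothetical probabilities of being employed, driven only by a
# non-higher-education factor (family networks). Illustrative values only.
P_EMPLOYED = {"networked": 0.9, "not_networked": 0.6}


def employment_rate(share_networked, course_effect=0.0):
    """Expected employment rate (a graduate functioning) for a cohort.

    share_networked: fraction of the cohort with strong family networks.
    course_effect:   hypothetical boost caused by the course itself
                     (a stand-in for a graduate outcome).
    """
    baseline = (
        share_networked * P_EMPLOYED["networked"]
        + (1 - share_networked) * P_EMPLOYED["not_networked"]
    )
    # Cap at 1.0 so the result remains a valid rate.
    return min(1.0, baseline + course_effect)


# Course A: no causal effect, but 80% of students are well networked.
course_a = employment_rate(share_networked=0.8, course_effect=0.0)

# Course B: a real (hypothetical) effect of 5 points, but only 20% networked.
course_b = employment_rate(share_networked=0.2, course_effect=0.05)

print(f"Course A employment rate: {course_a:.2f}")
print(f"Course B employment rate: {course_b:.2f}")
```

Under these assumed numbers, Course A reports the higher employment rate even though only Course B has any influence on its students. The observed rate alone cannot tell the two situations apart.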

This difference between descriptive graduate functionings and causal graduate capabilities and outcomes has important consequences. Because graduate functionings are descriptions of the world, we can go out and measure them in a relatively simple way. However, graduate capabilities and outcomes involve causes, and so can only be assessed through nuanced research rather than simple empirical observation. To make a claim about a graduate outcome is to offer a causal explanation of how some aspect of higher education (e.g. engineering curricula) tends to promote a particular graduate capability (e.g. social capital), which in turn leads to a particular graduate functioning (e.g. high-skilled employment). More than this, we also need to understand how these causal processes vary for different students in different institutions. This is clearly no simple task.

When we talk about the value or quality of higher education, most often we are making a causal claim about the impact of higher education teaching on students. To say one university course has more value than another is to make a causal claim about the impact of courses on students. But if we want to make a causal claim, it makes no sense to rely on non-causal graduate functionings such as graduate employment rates: these are simple descriptions of the world and could be caused by a huge range of different higher education and non-higher education factors. Instead, if we want to talk about the value or quality of a degree, we need to assess graduate outcomes and the complex ways in which they contribute to particular graduate functionings.

In terms of the Engineering Opportunity report, it would be fascinating to learn more about the causes that underlie the descriptive trends on employment rates and salaries that are detailed. This would be research that looks at the ways engineering education promotes certain capabilities, and how these in turn lead to graduate employment or other valuable functionings, as well as considering how this varies between different students, courses and institutions. Is it something about engineering education that causes high graduate employment rates and salaries, and if so, how does this operate? Or is it the symbolic value placed on engineering degrees by employers, or structural inequalities in the labour market, that lead to these outcomes? It is only by unpacking the causal processes underneath the graduate employment data that we can begin to understand the impact of engineering courses on students.

This entails that we should have more research exploring the causal processes that underlie graduate functionings. But it also means that policymakers should abandon the use of graduate employment data to measure higher education value and quality, as this misrecognises graduate employment as a causal graduate outcome rather than a descriptive graduate functioning. If we embrace the complex assessment of graduate outcomes, we will have to abandon attempts at simple comparisons between universities, but in return we will gain a more accurate picture of the actual transformations promoted by higher education.

Comments

Peter McIlveen says:

Thanks. This blog addresses a serious problem.

Research by my team found that Australia’s graduate outcome survey’s measures of satisfaction etc. have little relation with employment outcomes, yet universities happily promote their reputations by using ratings by graduates. Curious.

https://link.springer.com/article/10.1007/s10775-021-09477-0

Peter
