Dennis Sherwood: The broken school exam system needs an upgrade

The consequences of this year’s Great Grading Disaster continue to rumble on. Many students still feel damaged as a result of the ‘internal moderation’ exercised by their schools before their ‘centre assessment grades’ were submitted, hence the petition that, although rejected by the Department for Education (DfE), will be debated in Parliament on Monday 12 October. That debate might also encompass the significantly increasing pressure to reform the much-broken school exam system, pressure being exerted by, for example, the ‘One Nation’ group of Tory MPs who are campaigning to discard GCSEs, and the broadly-based ‘Rethinking Assessment’ community who also have GCSEs in their sights, citing the unreliability of exam grades as one of the key drivers of change.

Scotland has already taken that idea on board, at least in the short term, having announced that the summer 2021 National 5 exams (roughly equivalent to GCSE) will not be held, with grades being determined by teacher assessment and course-work. Meanwhile, at the time of writing, Ofqual and the DfE continue, as ever, to defend the status quo, confirming that GCSE, AS and A level exams will take place, but possibly a few weeks after their originally-scheduled times.

My personal view is that, over the coming months, different schools will suffer different degrees of virus-induced disruption, thereby ploughing up any educational level playing-field that might have existed – if it ever did. So my ‘Plan A’ would be to decide, now, to award grades for all exams based on teacher assessment, but done properly this time, as suggested, for example, in the second alternative here, so that students, teachers, parents, carers, employers, universities and colleges all know what to expect. And in my contingency drawer, I would have some exam papers, so ‘Plan B’ would be to hold exams only if everyone agrees, sometime around Easter 2021, that two conditions are satisfactorily fulfilled:

- The exams must be fair, in that all students must have had an equal opportunity for learning.

- The resulting grades must be fully trustworthy and reliable, in contrast to the current position, as described so eloquently, but obliquely, in the recent statement by Dame Glenys Stacey, Ofqual’s Interim Chief Regulator, that exam grades ‘are reliable to one grade either way’.

Indeed, the unreliable grade problem is back to bite all those currently taking the autumn 2020 AS and A level exams – the 22,020 students who, presumably, were disappointed in the grades they ended up with after this summer’s chaos, and hoping for something better.

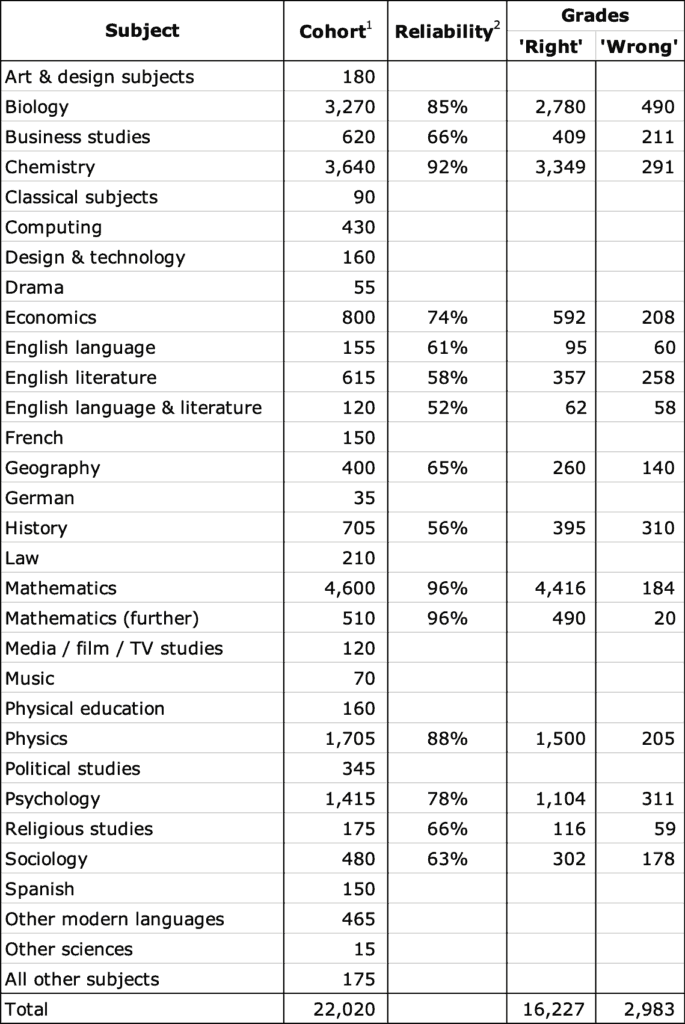

The following table shows the number of candidates sitting each subject (column 2), and the corresponding measure, if known, for that subject’s average grade reliability (column 3), as shown in Figure 12 on page 21 of Ofqual’s November 2018 report, Marking consistency metrics – An update:

Candidates for autumn 2020 AS and A level exams

Note 2: Figure 12, page 21, https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/759207/Marking_consistency_metrics_-_an_update_-_FINAL64492.pdf

Column 4 applies the reliability percentage (if known) to the subject cohort to estimate the likely number of ‘right’ grades; column 5, the estimate of the number of ‘wrong’ grades, is the difference between the subject cohort, and the number of ‘right’ grades.

Of the 19,210 candidates taking a subject for which the grade reliability is known, this analysis suggests that some 16,227 will be awarded the ‘right’ grade and 2,983, the ‘wrong’ one. That leaves a further 2,810 candidates taking subjects for which the reliability is unknown. As I have discussed elsewhere, the overall average reliability of exam grades is about 75%, so using that figure suggests a further 2,107 ‘right’ grades and 703 ‘wrong’ ones.

Accordingly, for the whole cohort of 22,020 candidates, the number of ‘right’ grades likely to be awarded is 18,334 (say, around 18,000), and the number of ‘wrong’ grades, 3,686 (say, around 3,500). The average grade reliability for this cohort is therefore about 18,334/22,020 = 83%, rather better than the overall average reliability of 75% – that’s because this autumn’s cohort contains a much greater proportion of the more reliable science subjects as compared to a ‘normal’ summer A level cohort.
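The arithmetic behind these estimates can be retraced in a few lines of Python. This is only a sketch using the figures quoted above: the variable names are illustrative, and rounding the 75% estimate down to a whole number of grades is an assumption, chosen because it reproduces the 2,107 given in the text.

```python
# Figures quoted in the text; variable names are illustrative only.
known_entries = 19_210        # subjects with a published reliability measure
known_right = 16_227          # estimated 'right' grades among those
unknown_entries = 2_810       # subjects with no published reliability measure
AVERAGE_RELIABILITY_PCT = 75  # overall average reliability, used as a stand-in

known_wrong = known_entries - known_right

# Assumption: round down to a whole number of grades (2,107.5 -> 2,107).
unknown_right = unknown_entries * AVERAGE_RELIABILITY_PCT // 100
unknown_wrong = unknown_entries - unknown_right

total = known_entries + unknown_entries
total_right = known_right + unknown_right
total_wrong = known_wrong + unknown_wrong
overall_reliability = total_right / total

print(f"right: {total_right:,}  wrong: {total_wrong:,}  of {total:,}")
print(f"overall reliability: {overall_reliability:.0%}")
```

Changing `AVERAGE_RELIABILITY_PCT` shows how sensitive the headline estimate is to the stand-in figure used for subjects with no published measure.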

There are two other important differences too. Typically, more than 750,000 students take AS and A levels, so this autumn’s cohort of 22,020 is very much smaller; secondly, those choosing to take this autumn’s exams are likely to be seeking up-grades as compared to their awards this summer. So no student who already has an A* will be sitting, but there are probably many with an A who hope for an A*, or a B hoping for an A. That suggests a clustering of the cohort around grade boundaries in general, and the B/A and A/A* boundaries in particular.

That gives Ofqual a headache. The basic assumptions that they have used to determine grade boundaries in the past – that the cohorts are large, and that there is a spread of marks across all abilities – both fail. That undermines the policy of ‘comparative outcomes’, for there is no suitable comparison. Furthermore, it challenges the concept of ‘norm referencing’, whereby grades are determined by reference to the performance of other candidates, with a given percentage (more or less) of the cohort being awarded each grade across the whole grade scale. But any move towards ‘criterion referencing’, in which each candidate is awarded the grade they are deemed to merit, even if all candidates are awarded A*s, not only cuts across years of policy, but also raises the spectre that different standards might be used by the different exam boards – suppose, for example, that [this board’s] Physics exam is ‘easier’ than [that one’s]? This possibility is a consequence of the decision for there to be a ‘competitive market’ in school exams, and is, to my mind, another reason for reform: if anyone can identify on what grounds the current exam boards ‘compete’, and why any such competition is a ‘good thing’, please post a comment!

Even if Ofqual can solve the problems of drawing the grade boundaries in fair places, and ensuring consistency of standards across the exam boards, the problem of the fundamental unreliability of grades remains. Perhaps Ofqual will pull something out of their hat – for example, to use only senior examiners for marking the scripts, or to review, very carefully, all scripts whose marks are at, or very close to, a grade boundary, as might be possible for this autumn’s smaller cohorts.

But perhaps not. In which case, maybe as many as 3,500 students will be ‘awarded’ the wrong grade when the results are announced on Thursday, 17 December. But they won’t know, for they will have no ‘second opinion’; nor will they be able to appeal. And that estimate of 3,500 could well be wrong too, for it is based on Ofqual’s measurements of grade reliability derived from ‘normal’ cohorts. But since Ofqual don’t routinely declare the reliability of the grades awarded, and are most unlikely to do so this December, no one will know what the right number of wrong grades is.

The unreliability of grades – now acknowledged by Ofqual – is a scandal. And to me, this problem must be fixed before any exams are re-instated. Perhaps this too will feature in the parliamentary debate scheduled for next Monday, 12 October.

Comments

Jeremy says:

> the 22,020 students who, presumably, were disappointed in the grades they ended up with after this summer’s chaos

It’s likely that a significant proportion of the 22,020 are taking exams in the autumn because they did not receive any grades at all in the summer.

Dennis Sherwood says:

Jeremy – thank you, you are absolutely right. Much appreciated.

Huy Duong says:

Dear Dennis,

At Matthew Arnold School in Oxford, in the January 2020 mocks, which were taken under JCQ rules, 14 students achieved A* in A-level maths and were given A* in their Spring report. When it came to calculating the CAGs, as far as I understand, the school used cohort-wide statistics such as prior attainment and transition matrix, which gave a grade distribution with 6 A*s. Therefore, of the above 14 students, 6 were given A* CAG and 8 were given A.

Arguably, the process is deeply flawed because the A awarded to 8 of the students is more about statistics than those students’ own levels of attainment (“this cohort got these GCSE grades in maths, so they can only have so many of these grades at A levels”). As such, that process violated the fundamental premise of CAGs, which is that teachers know their students best.

Furthermore, a student might be given a low grade at A level maths despite having a high grade at GCSE maths because many OTHER STUDENTS got low grades at GCSE.

Helen says:

I am assuming the table shows the number of entries for each of the subjects. This could therefore include home schooled students who didn’t get any “results” and are sitting 3 A Levels, resits from last year and those this year who wish to actually sit an exam who could be doing anything from 1 to 3 A Levels. (I know some students do take 4, but they are in the minority). Therefore, the total number of students actually sitting exams is a lot less than 22,020. Any idea of the number of actual students?

Andrew Harland says:

One hopes the initiative being promoted by the CIEA to push for a more teacher assessment driven model based upon teaching and learning, led by teachers working closely with their students on a day to day basis, will help towards removing this externally promoted flawed system and put the educational experience back at the heart of this sector enhancing the wellbeing and engagement of all concerned.

Dennis Sherwood says:

Huy – yes, norm referencing makes all sorts of assumptions, one of them being that all cohorts are in essence the same. Which of course they are not. The whole thing is indeed deeply flawed, and needs reform.

Helen – thank you, and you are right that my statement about the “whole cohort of 22,020 candidates” is not correct: there were 22,020 exam entries, but the number of candidates will be fewer in that some will indeed be sitting more than one subject. I think, though, that the number of entries for any one subject, as shown in the table, is likely to be the number of candidates too, for the same candidate will probably not take the same exam more than once. I don’t know how many individual candidates there are, and don’t know how to find out – so perhaps another reader can help. So thanks again for pointing that out, and so politely too!!!

And Andrew – please take the opportunity to tell us more about CIEA…

Jeremy says:

I’m not sure the number of candidates has been published. But there’s more detail about the types of centres in Ofqual’s official statistics (https://www.gov.uk/government/statistics/entries-for-as-and-a-level-autumn-2020-exam-series) which may help to understand who’s taking exams in the autumn:

Here’s the breakdown of A level entries by centre type:

Secondary non-selective, maintained 4,770

Sixth Form and FE 3,810

Independent 2,045

Selective 815

Other 8,655

(where “Other includes: college of higher education, university department, tutorial college, language school, special school, pupil referral unit (PRU), HM Young Offender Institute (HMYOI), HM Prison, training centre and private candidates. Private candidates include part-time students, distance learners or individuals who are privately tutored but some centres may also classify year 14 students who left the centre at the end of the 2019/20 academic year as private or external for the autumn series despite previously being internal candidates at the same centre.”)

In contrast, here’s the breakdown for summer 2019 (from https://www.gov.uk/government/statistics/provisional-entries-for-gcse-as-and-a-level-summer-2019-exam-series):

Independent 104,655

Non-NCN Classified 30

Secondary non-selective, non-independent 431,610

Selective 30,800

Sixth Form and FE 169,165

Other* 9,330

So “Other” entries in autumn are at 93% of the summer 2019 levels, while (e.g.) “Sixth Form and FE” entries are at 2% of the summer 2019 levels.
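Those percentages can be checked directly from the figures quoted in this comment. The following is a minimal sketch in Python, restricted to the centre types whose names match in both lists (treating "Other*" as "Other"); the dictionary names are illustrative.

```python
# A level entries by centre type, as quoted above.
autumn_2020 = {
    "Other": 8_655,
    "Sixth Form and FE": 3_810,
    "Independent": 2_045,
    "Selective": 815,
}
summer_2019 = {
    "Other": 9_330,
    "Sixth Form and FE": 169_165,
    "Independent": 104_655,
    "Selective": 30_800,
}

# Autumn 2020 entries as a fraction of summer 2019 entries, per centre type.
share = {name: autumn_2020[name] / summer_2019[name] for name in autumn_2020}

for name, fraction in share.items():
    print(f"{name}: {fraction:.0%} of summer 2019 entries")
```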

Dennis Sherwood says:

Hi Jeremy – many thanks; that’s most helpful!

Your point about “other” is striking… I wonder how many are students who ‘left the centre’ – or perhaps ‘left’ is a euphemism for ‘have not been funded by’?

simon kaufman says:

Dennis – another very powerful contribution particularly when aligned with the numbers reported by Jeremy showing the distribution by centre type of this autumn’s entries.

The weighting of entries towards the ‘other’ category almost certainly means that a very high proportion of this cohort will be candidates who as private entrants could not be given a CAG back in the summer whilst presumably a significant proportion of the remaining entrants will be students dissatisfied with the outcome of the CAG process and liable to be heavily clustered around a narrow band of attainment – presumably at the A/B and A*/A grade boundaries where this variation is critical to the success of application to high demand subjects within Russell Group universities.

The preponderance of these two cohorts within the autumn entry population will, as you suggest, generate real problems for Ofqual if it attempts to determine grade boundaries on the same principles as have been applied to summer exams. Not doing so, however, raises significant issues of comparability to address with the DfE, if Ofqual and the boards comprising JCQ recognise that the autumn examination cohort is substantially different in character, making application of the standard norm-referencing model highly questionable.

As you say, we will have to wait to see what Ofqual ‘pulls out of its hat’ as a solution, with very little prospect that this will either be ‘fair’ or be capable of sustaining the confidence of the wider education community. And all this as ministers predictably ‘double down’ on summer examinations, with minimal changes to curriculum coverage and no discernible signs of a ‘Plan B/C’ which could be rolled out if/when the scope of the pandemic requires a rethink on what can safely be delivered further down the line next spring/summer.

Dennis Sherwood says:

Hi Simon – thank you; yes, indeed.

And the GCSE exams, scheduled for November, are likely to be a big problem too. I haven’t seen any announcement yet of the number of entries, but I imagine that some candidates will be students at the top end, ‘awarded’ CAGs of 7 or 8, but wanting an 8 or a 9, and there will also be many with CAGs of grade 3 in English or Maths who really want a 4. So the distribution of ability is quite likely to be very different from a normal distribution, perhaps with two ‘humps’.

Also, Dame Glenys Stacey was interviewed on the Today programme yesterday morning, and dropped a hint about possibly using multiple choice “in some subjects” in the summer 2021 exams (if they go ahead!) – see, for example, https://schoolsweek.co.uk/more-optionality-in-next-years-exams-still-a-possibility-says-ofqual-chief/.

I wonder if this is trailing Ofqual’s solution to the grade (un)reliability problem? Certainly, unambiguous multiple choice questions have unambiguous right/wrong answers, and so deliver both ‘accurate’ and ‘reliable’ grades. But there are other consequences… and it would be truly crazy if multiple choice were introduced on the pretext of the necessity of delivering reliable grades – this would truly be the Ofqual bureaucratic tail wagging the educational dog.

There are many other ways of delivering reliable grades (https://www.hepi.ac.uk/2019/07/16/students-will-be-given-more-than-1-5-million-wrong-gcse-as-and-a-level-grades-this-summer-here-are-some-potential-solutions-which-do-you-prefer/) – and, to my mind, multiple choice is one of the bad ones!!! We really need an independent study to review the options: I don’t think Ofqual can be trusted to do this fairly…
