Can we assess ‘job quality’ using the Graduate Outcomes survey?

This blog was kindly contributed by Tom Fryer, a PhD researcher at the University of Manchester. Tom has written for HEPI before about comprehensive universities and about how to define a graduate outcome. You can find Tom on Twitter @TomFryer4.

HESA’s report from 8 June 2021, Graduate Outcomes: A statistical measure of the design and nature of work, argues that the Graduate Outcomes survey can be used to assess job quality. This has the potential to broaden our focus beyond employment rates and salaries, which tend to dominate our current policy discussions. The report outlines how a metric of job quality could be created and recommends ‘a period of engagement … around this statistical measure’ (p.14).

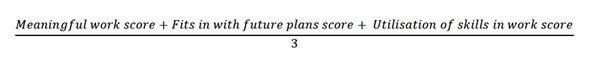

The report proposes making a ‘job quality’ metric from three questions: the extent to which graduates agree that their current work 1) is meaningful, 2) fits with future plans and 3) utilises what they learnt during their studies — all of which use a five-level Likert scale from ‘Strongly disagree’ to ‘Strongly agree’. The metric is formed by converting the answers to numeric values (‘Strongly disagree’ is given a value of 1 and ‘Strongly agree’ is 5) and an average is taken across the three questions. This results in a composite variable, made up of the three questions in equal parts, which ranges in value from 1 to 5.
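The calculation described above can be sketched in a few lines of Python. This is only an illustration of the averaging step, not HESA's own code: the report names the two ends of the scale, so the three middle labels, the function name and the example graduate are all assumptions made here.

```python
from statistics import mean

# Five-level Likert scale. The two endpoints come from the report;
# the three middle labels are assumed for illustration.
LIKERT = {
    "Strongly disagree": 1,
    "Disagree": 2,
    "Neither agree nor disagree": 3,
    "Agree": 4,
    "Strongly agree": 5,
}


def job_quality_score(meaningful, fits_plans, uses_learning):
    """Average the three answers with equal weight, giving a value from 1 to 5."""
    return mean(LIKERT[answer] for answer in (meaningful, fits_plans, uses_learning))


# Hypothetical graduate: finds the work meaningful, is neutral on fit
# with future plans, and is not using what they learnt.
example = job_quality_score(
    "Strongly agree", "Neither agree nor disagree", "Disagree"
)
print(round(example, 2))
```

Because each question carries equal weight, a graduate's answer on any one question moves the composite by exactly a third of the change in that answer.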

This use of graduates’ perspectives on their work is a step in the right direction. It represents a move beyond a narrow focus on graduate employment rates and salaries that characterises contemporary policy discussions. Is it not as important to know if graduates are engaged in work they consider meaningful as whether this work is highly skilled or highly paid? At the very least, this fits with the idea that higher education should enrich graduates’ lives, one of the Office for Students’ key aims.

While the motivation behind the report may be sound, there are some issues with the proposed metric. Firstly, the report limits itself to one dimension of job quality, the ‘design and nature of work’, a term taken from the Chartered Institute of Personnel and Development (CIPD). However, the CIPD views job quality as composed of seven dimensions and the Graduate Outcomes survey collects data on many of these dimensions — for example, data on employment contracts would fall under another dimension that the CIPD calls ‘terms of employment’. Perhaps the report’s decision to limit the metric to one dimension of job quality reflects the early stage of the project; however, it would be useful to know if the authors view their proposed metric as working alone or alongside other dimensions of job quality.

Secondly, on a personal note, I do not find the ‘design and nature of work’ to be an intuitive category. While it is easy enough to grasp the meaning of other dimensions of job quality (for example, ‘terms of employment’ is made up of ‘contract type’ and ‘job security’), I find the ‘design and nature of work’ harder to wrap my head around. Do a job’s meaningfulness, fit with career plans and skill utilisation represent one unified aspect of job quality? A job could be meaningful, but not fit well with future plans or utilise skills; equally, a job might not be particularly meaningful, yet be the first step on a career ladder and involve extensive skill use. To me, the three questions composing ‘design and nature of work’ seem relatively separate, which makes their combination into a single metric problematic.

Even if my scepticism is unwarranted, there is a third problem with the metric as it is currently proposed. It has a muddled purpose — it cannot decide if it wants to describe the job quality of graduates or assess the impact of higher education on the job quality of graduates. This arises because the metric combines two different things into a single variable: 1) ‘graduate functionings’ that describe what graduates do in the world; and 2) ‘graduate outcomes’ that consider the causal impact of higher education. This terminology is introduced in more detail in my recent HEPI blog, as well as in this paper (also available here for those without institutional access).

The first two questions on ‘meaningfulness’ and ‘fit with future plans’ in the ‘job quality’ metric are graduate functionings that offer descriptions of how graduates function in the world. This enables us to answer descriptive questions such as: do graduates tend to have jobs that they consider meaningful? The crucial point is that these functionings tell us nothing about the causal impact of higher education. If we find that 60% of graduates have a job that they consider meaningful, we do not know anything about the causes that underlie graduates’ ability to access these meaningful jobs—these causes could be related to HE, but equally they could be completely unrelated.

In contrast, the third question in the ‘job quality’ metric is concerned with the causal impact of higher education. This question tries to gain information about how graduates perceive the impact of higher education on job quality, by asking if they are utilising what they learnt ‘during their studies’. While a descriptive question might ask: ‘To what extent are you utilising your skills and knowledge?’, the actual question in the survey is only interested in the utilisation of skills and knowledge that stems from higher education. This does not produce a descriptive graduate functioning (a description of skill and knowledge utilisation in the workplace), but rather graduates’ perceptions of a graduate outcome (an assessment of how the skills and knowledge learnt during HE are utilised in the workplace).

Unfortunately, this mixing of graduate functionings and graduate outcomes into a single metric makes it uninterpretable. A low score could indicate a low-quality job, but it could also indicate a high-quality job that happens to involve little utilisation of things learnt during higher education. A Maths graduate in a communications role might report that they are not using skills related to their higher education experience, but this does not mean this is low-quality work. By mixing graduate functionings and graduate outcomes the proposed metric provides neither a description of job quality nor an indication of how higher education has impacted this job quality.
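To make the interpretation problem concrete, here is a small sketch with invented numbers. Both graduates and their answers are hypothetical, and the helper names are mine rather than anything in the report; the point is only that two very different situations can produce the same composite score.

```python
# Hypothetical answers on the 1-5 scale, in the order:
# (meaningful, fits future plans, uses what was learnt during studies)
maths_grad_in_comms = (5, 5, 1)  # rewarding job, little use of degree content
unfulfilled_grad = (3, 3, 5)     # middling job, heavy use of degree content


def composite(answers):
    """Equal-weight average of all three answers, as in the proposed metric."""
    return sum(answers) / len(answers)


def functionings_only(answers):
    """Average of the two descriptive questions (meaningfulness and fit)."""
    return (answers[0] + answers[1]) / 2


for name, answers in [
    ("Maths graduate in comms", maths_grad_in_comms),
    ("Unfulfilled graduate", unfulfilled_grad),
]:
    print(
        name,
        "| composite:", round(composite(answers), 2),
        "| functionings:", functionings_only(answers),
        "| uses learning:", answers[2],
    )
```

The composite is identical for both graduates, yet the two descriptive questions and the question about learning point in opposite directions. Reporting the functionings and the perceived outcome separately keeps that difference visible.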

Using the Graduate Outcomes survey to assess job quality has potential and may help us to move beyond a narrow focus on employment rates and salaries. However, the job quality metric proposed in HESA’s report has some problems. It focuses on only one dimension of job quality, a dimension that I do not find particularly intuitive, and the metric has a slightly muddled purpose that makes it challenging to interpret.

If we move forward with this task, we must distinguish between graduate functionings and graduate outcomes, that is, between descriptions of what graduates do and assessments of HE’s impact on graduates. We should also recognise that while the Graduate Outcomes survey may be well suited to offering descriptions of graduates’ job quality (a graduate functioning), if we want to understand the impact of higher education on graduates’ job quality (a graduate outcome) it will be necessary to move beyond this survey data.

This causal assessment of higher education’s impact requires us to build upon survey data by conducting nuanced research on how particular institutions and courses influence job quality and how this varies for different graduates in a range of different employment sectors.

Comments

Sarah says:

Thank you for this Tom – a really clear and concise explanation of both the proposed metric and its shortcomings.
