How reliable are exam grades?

- This blog was kindly provided by Mary Curnock Cook CBE. Mary has long experience in exams and assessments including during her time as a Director at the Qualifications & Curriculum Authority in the early 2000s. She is also a Trustee of HEPI. You can find Mary on Twitter/X @MaryCurnockCook.

In this blog, I want to provide some context and challenge to two erroneous statements that are made about exam grades:

- That ‘one in four exam grades is wrong’

- That grades are only reliable to ‘within one grade either way’

For teenagers, exam results days mark major milestones. For most GCSE, A level and BTEC students, the grades they are awarded this summer represent a passport which will either provide open visas to all sorts of opportunities or, in some cases, will restrict their movement up the ladder of opportunity. For admissions professionals in universities, A level results day is critical in determining the number and ‘quality’ of students admitted and the impact this has on a university’s finances and league table rankings. In short, grades are important. It is therefore vital that grades are reliable and trusted by all those who use them – students, universities and colleges, and employers.

The ‘one in four grades is wrong’ claim – a gross misunderstanding

The claim that one in four grades is wrong is derived from a 2018 Ofqual research report on Marking Consistency Metrics. This report uses two descriptors for marks awarded – ‘definitive’ marks and ‘legitimate’ marks. A ‘definitive mark’ is described by Ofqual as ‘the terminology ordinarily used in exam boards for the mark given by the senior examiners at item [question] level’. Such marks are seeded into the mass marking of exam questions to ensure that no markers are routinely marking too leniently or too harshly. In other words, the ‘definitive mark’ is a proxy ‘correct’ mark used for quality assurance processes only.

The probability of being awarded a ‘definitive’ grade at qualification level varies from almost 100% for subjects like mathematics, to nearer 50% for the more subjective judgements used in subjects like English or History. If you were to weight these probabilities across subjects to derive an average, you might arrive at a probability of roughly one in four marks not being the ‘definitive’ mark. Critics have extrapolated this to the idea that one in four grades must therefore be wrong, which is a gross misrepresentation of the position, not least because no-one seriously advocates for an assessment regime where there are only correct or incorrect answers to all exam questions.

It is therefore important to understand the concept of a ‘legitimate’ mark, which arises because our system uses a variety of assessment approaches for different subjects to ensure that each exam is a valid way of assessing what we expect the candidate to know and be able to do in a particular subject. In many subjects there will be several marks either side of the definitive mark that are equally legitimate. They reflect the reality that even the most expert and experienced examiners in a subject will not always agree on the precise number of marks that an essay or longer answer is worth. But those different marks are not ‘wrong’.

Mathematics assessment, for example, is very reliable at the ‘definitive’ level because there is more likely to be an objectively correct answer to many questions. In contrast, English is less reliable at the definitive level because of the more frequent (and desirable) use of longer, extended response questions to which there is no objectively ‘correct’ answer. As Ofqual’s paper says: “To have one benchmark against which all components from subjects are compared would be a very blunt tool for comparison,” adding that it would “neither set a standard for marking consistency that was achievable […] nor work to improve components in those subjects.” The ‘definitive’ mark was used in Ofqual’s paper because those were the only marks captured by the exam boards that were suitable for this kind of statistical analysis.

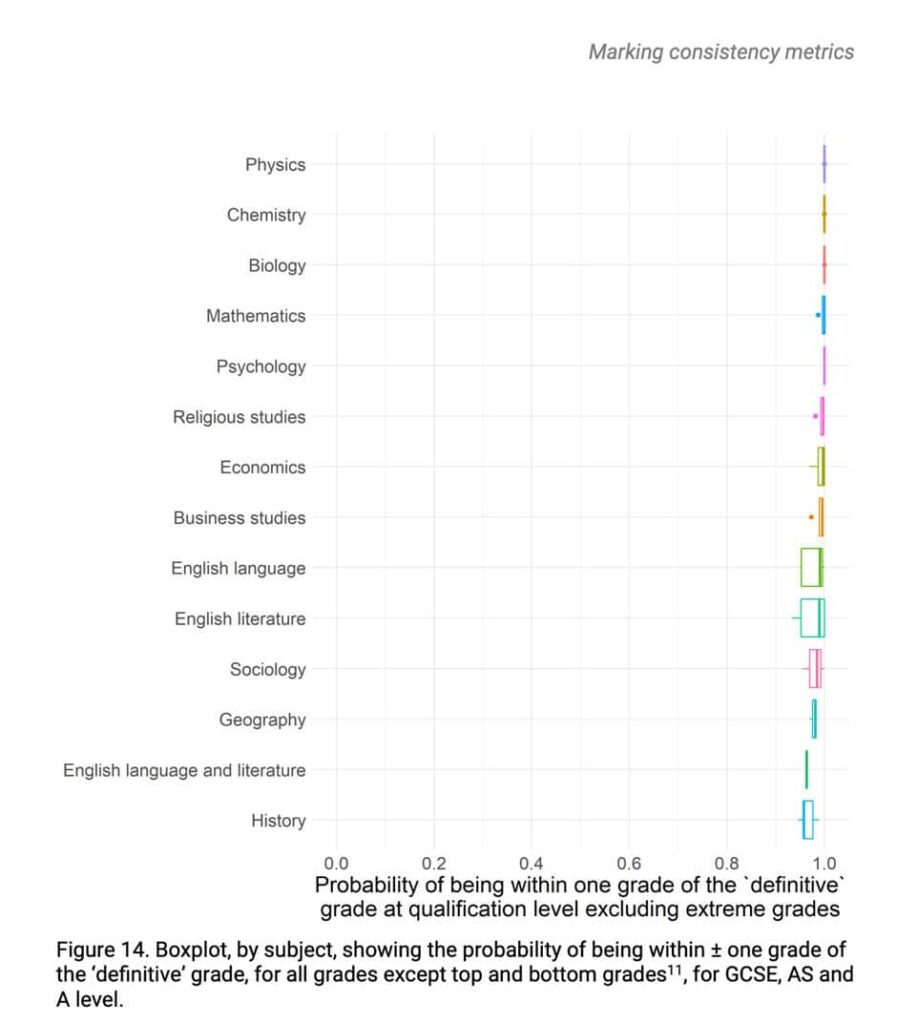

We can all accept that there is inherent imprecision in many assessments, including in Higher Education. For this reason, and using the internationally accepted benchmarks for reliability of assessments, regulators use the concept of a legitimate grade, defined as 95% or more grades being within one grade either side of the ‘definitive’ grade. Ofqual’s analysis shows that “the probability of being awarded a grade within one grade of the definitive grade is 1 or nearly 1 (ie 100% or near 100% probability) for nearly all subjects”, as shown in Figure 14 from their report below.

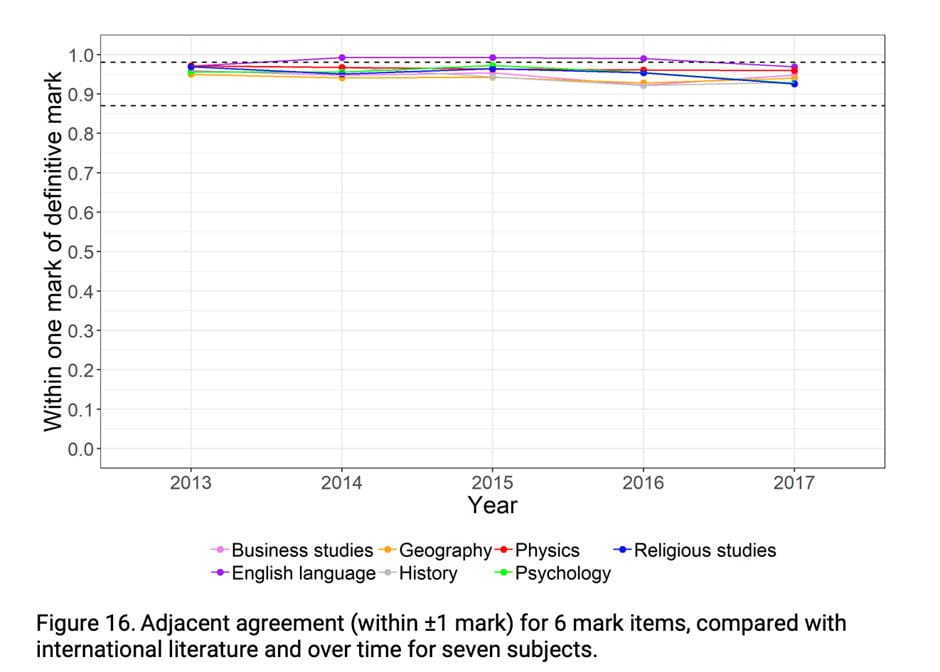

Ofqual’s analysis (see Fig 16 below) shows that our exam system has been able to mark reliably within this internationally accepted definition very consistently over time. We can therefore count our system as amongst the best in the world for reliable assessment.

The ‘reliable to one grade either way’ claim misunderstands the context

This internationally accepted benchmark for exam marking reliability is the context for the former Ofqual Chief Regulator’s comment at an Education Select Committee hearing after the summer exams in 2020 that grades are “reliable to one grade either way” (Q1059). Some commentators have chosen to weaponise this statement in a way that shows poor understanding of the concepts underpinning reliable and valid assessment and risks doing immense damage to students and to public confidence in our exam system.

Public confidence and trust in our exam system is vital for a functioning education system and to support students to progress to further learning or employment. This was sadly confirmed during the two years of the pandemic when exams were cancelled and replaced by teacher assessed grades. During the UPP Foundation Student Futures Commission which I chaired in 2021, I was dismayed to hear students describe themselves as the ‘cohort with the fake grades’. My own research in 2021 highlighted an unexplained difference between the teacher-assessed A level grades for boys and girls, and numerous press articles argued about perceived unfairness between different schools, different pupil characteristics, and different contexts during the years when exams were cancelled. Only a national exam system with a standardised and quality-assured marking system that meets international benchmarks can put assessment on a level playing field for all students.

Without such a national system of assessment, we would be unable to run a fair university admissions process, and visibility of attainment gaps between different groups of students would disappear with the consequent risks to social justice and social mobility.

If it were true that one in four exam grades is ‘wrong’, there would be a national outrage. Instead, the vast majority of grades are accepted as legitimate with only a tiny proportion contested. Last year, out of a total of 846,885 AS and A level grades awarded in England, only 9,910 grades were changed following administrative errors, reviews of marking or reviews of moderation, according to Ofqual. This represents just over 1%.
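The ‘just over 1%’ figure follows directly from the two Ofqual numbers quoted above; a minimal check:

```python
# Ofqual figures quoted above: AS and A level grades awarded in England
# last year, and how many were changed after administrative errors,
# reviews of marking or reviews of moderation.
total_grades = 846_885
changed_grades = 9_910

changed_share = changed_grades / total_grades
unchanged_share = 1 - changed_share

print(f"Changed:   {changed_share:.2%}")    # Changed:   1.17%
print(f"Unchanged: {unchanged_share:.2%}")  # Unchanged: 98.83%
```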

Since schools have visibility of exam scripts and marks after grades are awarded, they have full access to their pupils’ performance in the exam and the way this was judged by markers. Schools and colleges can request a review of marking if they think there is an error in the marking, and if they are not satisfied with the outcome of that, they can appeal on a number of grounds, including academic judgement.

Take a typical sixth form of 200 A level candidates, each taking three A levels, thus 600 grades awarded. If there really were one in four, or 150, ‘wrong’ grades awarded in each school like this, I have no doubt that the number of challenges would explode, regardless of the cost. Instead, the reality is that 99% of students, their teachers and their parents, with access to their exam scripts and marks as evidence, accept their grades as a legitimate measure of their performance.
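The arithmetic behind this example is sketched below, with the national change rate quoted earlier (9,910 of 846,885 grades) applied to the same hypothetical sixth form for comparison. That comparison assumes the school is typical of the national picture.

```python
# Hypothetical sixth form from the example above.
candidates = 200
a_levels_each = 3
grades = candidates * a_levels_each   # 600 grades awarded

# Under the 'one in four grades is wrong' claim.
claimed_wrong = grades // 4           # 150 grades

# National rate of grade changes quoted earlier, applied to this school
# (assumes the school is typical of the national picture).
national_change_rate = 9_910 / 846_885
expected_changes = grades * national_change_rate

print(grades, claimed_wrong, round(expected_changes))  # 600 150 7
```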

Those who question their grades are often students whose marks put them on the borderline between two grades. The reason that so few are regraded on appeal is that a student’s overall mark is often derived from multiple components (papers) and multiple individual items (questions) within those components. Since the majority of exams are marked entirely anonymously at item or question level (as opposed to markers marking whole scripts from each student), the chances of harshness or leniency are likely to be levelled out at each stage of aggregation of marks – which is why qualification level grades are more reliable than component level or individual item marks.
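The levelling-out argument can be illustrated with a small simulation. This is a sketch under strong simplifying assumptions, not a model of any exam board’s process: each item is worth 10 marks, each is marked by a different marker, and each marker’s harshness or leniency is treated as independent, zero-mean random noise with a standard deviation of one mark. The function name and parameters are hypothetical.

```python
import random
import statistics


def relative_error_spread(n_items, marks_per_item=10, error_sd=1.0,
                          trials=5_000, seed=1):
    """Standard deviation of the total-mark error, as a share of the
    maximum mark, when each item's marking error is independent,
    zero-mean Gaussian noise (an illustrative assumption)."""
    rng = random.Random(seed)
    max_mark = n_items * marks_per_item
    errors = []
    for _ in range(trials):
        # Sum one independent marker error per item.
        total_error = sum(rng.gauss(0.0, error_sd) for _ in range(n_items))
        errors.append(total_error / max_mark)
    return statistics.stdev(errors)


for n in (1, 4, 16, 64):
    print(n, round(relative_error_spread(n), 4))
```

Under these assumptions the relative spread halves each time the number of items is multiplied by four (it falls as one over the square root of the number of items). Errors that are correlated across items, such as a systematically harsh marker on a whole component, would not average out in the same way.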

Some have called for individual marks to be stated on exam certificates alongside a confidence interval, but assessment outcomes expressed at the level of marks rather than grades simply create more cliff edges and borderlines for students – a particular challenge for highly competitive university admissions. There are enough criticisms of ‘teaching to the test’ already without adding summers filled with mark-harvesting. Broad grade widths are always going to be more reliable than marks, and users accept that a grade is a legitimate categorisation of what a student knows, understands and can do in respect of a particular specification, domain, or subject.

We know this because it works. Students get their grades and are able to use them effectively to support their progression to further learning, training, or employment. Universities have ample evidence about the relative success rates for students with different grades and continue to be confident in setting their course entry requirements accordingly.

None of this is to say that exam grading reliability cannot be improved. Good assessment design is essential, as is markers’ use of the full range of marks for a given question. The rate of ‘seeding’ control items into markers’ caseload and the tolerances allowed by the exam boards also contribute to the quality of marking. Mass double marking, currently unaffordable in terms of time and financial constraints, could become standard using artificial intelligence engines to provide effective, unbiased second marking quality assurance for millions of items at a fraction of the cost.

I would also not wish my confidence in the marking of the current exams to be misconstrued as unvarnished support for our current schools’ assessment approach. I’ve been encouraged to read of Ofqual chief regulator Dr Jo Saxton’s openness to moving towards more digitally-enabled approaches which would support both innovation in assessment methodology and superior data to use in further improvements to reliability and validity of assessment.

The assertion that ‘one in four school exam grades is wrong’ is a gross misrepresentation of reality and represents a naïve understanding of assessment. No one wants an assessment regime that reduces everything assessed to ‘right’ and ‘wrong’ answers and that’s why stakeholders in the education and employment ecosystem continue to accept that legitimate grades really are legitimate and represent meaningful currency for progression to further learning or employment for the vast majority of students.

Comments

Jeremy says:

There are some good points here, but it goes too far in places. For example:

> Instead, the reality is that 99% of students, their teachers and their parents, with access to their exam scripts and marks as evidence, accept their grades as a legitimate measure of their performance.

The idea that “99%” of students/parents/teachers “accept their grades” is not supported by the evidence, since around 5-6% of grades are challenged each year.

Alarmingly, the same evidence also shows that challenging more grades leads to many more grade changes: FE colleges challenge 5% of grades and see 13% of those changed, while independent schools challenge 10% of A level grades and see 18% of those changed. So the fact that relatively small numbers of grades are changed each year doesn’t show that assessment in the UK is especially reliable; it shows that state schools are letting their pupils down by not challenging anywhere near as many grades as they ought to.
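Combining the two rates quoted in this comment gives the share of all grades that end up changed in each type of centre; a quick calculation (the dictionary and function names are illustrative):

```python
def share_changed(challenged, changed_if_challenged):
    """Share of all grades changed = share challenged x share of challenged grades changed."""
    return challenged * changed_if_challenged


# (share of grades challenged, share of challenged grades changed),
# as quoted in the comment above.
centres = {
    "FE colleges": (0.05, 0.13),
    "Independent schools (A level)": (0.10, 0.18),
}

for name, rates in centres.items():
    print(f"{name}: {share_changed(*rates):.2%} of all grades changed")
# FE colleges: 0.65% of all grades changed
# Independent schools (A level): 1.80% of all grades changed
```

On these figures, independent schools see almost three times as many grade changes per grade awarded as FE colleges.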

Alice Prochaska says:

I had senior experience, though now retired for several years, at both Yale and Oxford, including assessments for competitive international scholarships. Examinations and grading at those two universities are very different, with far more emphasis on continuous assessment in the American system. I have long been concerned about the distorting effects of the examination system on individuals’ final outcomes. This article is reassuring about the grading system, and I strongly agree confidence in that is vital. But I would like to see more about the impact of examination systems themselves on students’ learning and self-confidence. With more “subjective” subjects such as English and History especially there are several elements (including simple luck, e.g. in the available choice of questions) that determine individuals’ outcomes. Fear of exams plus concern about their fairness are not confined to questions about the objectivity of the grading system. I’d like to see this very helpful article followed by something on the research into exams’ impact on learning and confidence beyond the grading system.

Dennis Sherwood says:

Thank you for confirming that ‘grades are important’, and that ‘it is vital that grades are trusted by all those who use them’. Thank you too for confirming that ‘in many subjects there will be several marks either side of the definitive mark that are equally legitimate’, none of which are ‘wrong’.

With that in mind, let me table the possibility that a GCSE English script might be given 35, 36 or 37 marks, all of which are, as you state, ‘legitimate’, and none ‘wrong’.

If, after all the marking has been done, grade 4 is defined as ‘all marks from 35 to 40 inclusive’, then the candidate is awarded grade 4 no matter which of these marks is given.

However, if the 3/4 boundary is 35/36, then a mark of 35 results in a certificate showing grade 3, whereas marks of 36 and 37 result in grade 4. The marks are all legitimate, but the grades are different. Materially so, especially for GCSE English, for which a grade 3 interrupts progression, requires the candidate to re-sit, and can cause who-knows-what damage to a student’s mental health.
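The boundary effect in this example can be sketched as a simple mark-to-grade lookup. Only grades 3 and 4 are modelled, and the boundary values are the hypothetical ones from the example, not real grade boundaries:

```python
def grade_for(mark, lowest_grade_4_mark):
    """Grade 4 if the mark reaches the (hypothetical) 3/4 boundary, otherwise grade 3."""
    return 4 if mark >= lowest_grade_4_mark else 3


legitimate_marks = [35, 36, 37]  # three marks, all 'legitimate' for the same script

# Grade 4 covers 35 to 40: every legitimate mark gives the same grade.
print([grade_for(m, lowest_grade_4_mark=35) for m in legitimate_marks])  # [4, 4, 4]

# 3/4 boundary at 35/36: the same script can receive either grade.
print([grade_for(m, lowest_grade_4_mark=36) for m in legitimate_marks])  # [3, 4, 4]
```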

This is surely grading-by-lottery, for it is a matter of chance as to whether the script is marked 35, 36 or 37; a chance which possibly determines the destiny of a 16-year-old. Or rather about 35,000 16-year-olds, for that is my estimate of the number of students likely to be awarded grade 3, fail, in GCSE English on 24 August, but who might have been awarded grade 4, had the dice fallen another way.

I chose those marks – 35, 36 and 37 – deliberately. They are used as an example by Ofqual’s Chief Regulator, Dr Jo Saxton, in an interview with the distinguished educational journalist and commentator, Laura McInerney – it’s on YouTube, https://www.youtube.com/watch?v=H5m-kMn8eHg, and those numbers are at about 9:06. And indeed I too commend Dr Saxton’s openness, for she then goes on to say ‘On that basis, more than one grade could well be a legitimate reflection of the student’s performance’.

Ah. The magic word. ‘Legitimate’. Indeed.

So both grade 3 and grade 4 are ‘legitimate reflections of the student’s performance’.

That would be fine if both grades were declared. But the certificate shows only the single grade. Great if it’s the 4. And is it just too bad if it happens to be the 3? Just too bad to lose a year of development? To have to re-sit? To carry the stigma of ‘I failed GCSE English’?

If ‘more than one grade is a legitimate reflection’, why is only one grade declared? What other grades are also ‘legitimate’? And what is their ‘legitimacy’?

The ‘more than one grade’ statement appears to me to be totally consistent with the definition of ‘legitimacy’ as ‘95% or more grades being within one grade of the ‘definitive’ grade’. Leaving aside the fate of candidates awarded any of the 5% of grades that are at least two grades adrift (of which there are soon to be about 300,000), it seems to me that this statement is both vague and unhelpful.

If 95% of grades are within one grade of the ‘definitive’ grade, are 94.9% spot on, and 0.1% one grade adrift? Or are 0.1% spot-on, and 94.9% one grade adrift?
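The ambiguity raised here can be made concrete with two made-up cohorts of 1,000 grades, each recorded as the difference between the awarded and the ‘definitive’ grade. Both meet a ‘95% within one grade’ benchmark, yet their exact-match rates are at opposite extremes (the cohorts and the function name are hypothetical):

```python
def summarise(offsets):
    """Return (share exactly matching, share within one grade) of the definitive grade.

    offsets: awarded grade minus definitive grade, one entry per grade.
    """
    n = len(offsets)
    exact = sum(1 for d in offsets if d == 0) / n
    within_one = sum(1 for d in offsets if abs(d) <= 1) / n
    return exact, within_one


mostly_exact = [0] * 949 + [1] * 1 + [2] * 50   # 94.9% spot on, 0.1% one grade adrift
mostly_adrift = [0] * 1 + [1] * 949 + [2] * 50  # 0.1% spot on, 94.9% one grade adrift

print(summarise(mostly_exact))   # (0.949, 0.95)
print(summarise(mostly_adrift))  # (0.001, 0.95)
```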

To me, that’s an important question, for to a Med School applicant, holding an offer of AAA but awarded AAB, that one grade makes all the difference in the world.

Which takes me to Dame Glenys Stacey’s statement that ‘grades are reliable to one grade either way’. Is this too not, in essence, the same thing?

So thank you for verifying that ‘more than one grade could well be a legitimate reflection of a student’s performance’. And thank you too for confirming there is a ‘probability [of] roughly one in four grades (not marks – that must have been a slip of the pen) not being the ‘definitive’ grade (oh dear, that slip again)’.

May I ask what adjective you would use to describe a grade that was not ‘definitive’ – or, to use Ofqual’s synonym ‘true’ (as on page 20 of the original Marking Consistency Metrics report of November 2016, https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/681625/Marking_consistency_metrics_-_November_2016.pdf, and also on page 21 of Ofqual’s 2015 Report to Parliament, https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/518953/2016-04-26-report-to-parliament-1-january-31st-december-2015.pdf)?

It strikes me that, if there is a benchmark of the ‘definitive’/ ‘true’ grade, then any grade that isn’t ‘definitive’/ ‘true’ is, in simple language, wrong, as discussed in detail in my HEPI blog of 15 January 2019.

I am also somewhat surprised at the argument I paraphrase as ‘things are in good shape because only 1% of grades are changed as the result of a challenge’, with, presumably, the hope that the reader will infer that the other 99% are OK.

May I point out that this argument was discredited in a blog posted to Ofqual’s website on 29 September 2014, written by Ofqual’s then Chief Regulator Glenys Stacey, as she was at that time (https://ofqual.blog.gov.uk/2014/09/29/work-ensure-best-quality-marking/).

This argument is, of course, still widely used, most recently by Sharon Hague, Senior Vice President, Pearson School Qualifications, at the hearing of the House of Lords Education for 11-16 Year Olds Committee (Q20, https://committees.parliament.uk/oralevidence/12996/pdf/), and the (false) explicit statement that ‘99.2% of our grades were accurate on results day’ is still to be found on the Pearson/Edexcel website (https://qualifications.pearson.com/content/dam/pdf/Support/services/Exam_marks_accuracy_infographic_160118.pdf).

That figure of 99.2% is indeed a puzzle. How is it possible for Pearson’s grades to be ‘99.2% accurate’ when Ofqual state that ‘95% of grades are within one grade of the definitive grade’, which to me implies that 5% of grades are at least two grades adrift?

But perhaps I just don’t understand.

Anyway, may I conclude by thanking you for confirming that exam grades are indeed unreliable.

Dennis Sherwood says:

“Are grades reliable to one grade either way or not?” is an important question, and was left as a tantalising cliff-hanger at the end of the July 13 hearing of the Lords Education for 11 – 16 Year Olds Committee – see about 12:23:23 on https://parliamentlive.tv/Event/Index/67100d6e-e98f-4a32-be23-2e49f318ad42.

So it’s very valuable that Mary Curnock Cook has articulated the ‘things are fine’ case so vividly.

I, of course, am on the ‘it hurts’ side. But it’s crucial that readers make up their own minds by reference to the original sources, which, I believe, are these.

Ofqual’s measures of grade reliability at ‘qualification’ level are not those shown in the Figures of this blog; rather, they are shown in Figure 12 on page 21 of Ofqual’s November 2018 report, Marking Consistency Metrics, https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/759207/Marking_consistency_metrics_-_an_update_-_FINAL64492.pdf. Measures of grade reliability at ‘unit level’ will be found in Figure 14 on page 25 of Ofqual’s November 2016 report, Marking Consistency Metrics, https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/681625/Marking_consistency_metrics_-_November_2016.pdf.

The first discussion of these results was, I believe, the article in Schools Week, dated 14 November 2016, https://schoolsweek.co.uk/half-of-english-literature-exam-papers-not-given-correct-grade-ofqual-report-finds/, and the first use of the contentious words ‘1 grade in 4 is wrong’ is in my paired HEPI blogs of 15 January and 25 February 2019, https://www.hepi.ac.uk/2019/01/15/1-school-exam-grade-in-4-is-wrong-does-this-matter/ and https://www.hepi.ac.uk/2019/02/25/1-school-exam-grade-in-4-is-wrong-thats-the-good-news/.

Dame Glenys Stacey’s statement that grades are ‘reliable to one grade either way’ can be heard, in context, just after 12:19:47 on https://parliamentlive.tv/Event/Index/a3d523ca-09fc-49a5-84e3-d50c3a3bcbe3; its ‘weaponisation’ is most explicitly discussed in this blog on the Rethinking Assessment website, https://rethinkingassessment.com/rethinking-blogs/just-how-reliable-are-exam-grades/.

The demonstration of the (very near) equivalence of grades ‘are reliable to one grade either way’ and ‘1 grade in 4 is wrong’ is based on some simulations I have done; as I stated in the blog, I am very happy for my simulations to be scrutinised.

Huy Duong says:

Overall it seems to me that a lot of this is simply playing with words. The author seems to be saying that, “It’s wrong for some commentators to say that 1 in 4 grades are wrong, and reason is the establishment is defining ‘wrong’ to mean so and so, and ‘right’ to mean so and so. According to these definitions one cannot say that 1 in 4 grades are wrong”.

However, whichever definitions of ‘wrong’ and ‘right’ the establishment chooses to use, it is irrefutable that students are subjected to a grade lottery. The same script might be given a mark of 25 or 30, and, therefore, might get a pass or a fail, depending on which examiner happens to mark it.

The establishment and the author take the view that both the pass and the fail are legitimate.

However, is it fair to the student? Do they deserve better? Have they been told that they are subjected to this grade lottery? How well do users of exam grades know that the grades they are looking at are the result of a lottery, where the same script might be legitimately given either a pass or a fail depending on which examiner happens to mark it?

Huy Duong says:

If, as the author and the establishment contend, for a given script, both “Pass” and “Fail” are equally legitimate, then for the student’s certificate to state only either “Pass” or “Fail”, that certificate is stating a half truth. As the saying goes, half a loaf of bread is bread, but half the truth is a lie. How many students’ life chances have been put in jeopardy because their certificates state half truths?

Huy Duong says:

The author mentions, “During the UPP Foundation Student Futures Commission which I chaired in 2021, I was dismayed to hear students describe themselves as the ‘cohort with the fake grades’.”

It is very sad that some students see themselves this way (which I think is too hard on themselves). This is entirely due to Ofqual’s incompetence, arrogance, lack of wisdom and care. With all its experts and researchers, Ofqual should have known that grading by absurd algorithm is different from grading by exam, and grading by TAG is different from grading by exam. If it didn’t know, it should have listened to other people.

In science, as in life, if two things are different, you don’t try to pass them off as the same; that would be dishonesty. You don’t put the label “Apple, large” on an orange, but that is what Ofqual did.

If Ofqual had used a different set of labels for grades that were going to come from their absurd algorithm and grades from TAGs, no student would feel that their grades are fake and the country wouldn’t have had to worry about grade inflation and deflation.

Huy Duong says:

Regarding this statement, “Instead, the reality is that 99% of students, their teachers and their parents, with access to their exam scripts and marks as evidence, accept their grades as a legitimate measure of their performance.”, I have just looked up AQA’s website, which states that students who are not private candidates and their parents are not allowed to appeal, they have to talk to their centres, and only heads of centres can appeal, paying over £100 for the first stage and over £200 for the second stage of appeal. Given this, we can conclude that 99% of students and their parents accept their grades as much as we can conclude that 90% of the population of a country accept their authoritarian government. I don’t think it’s a correct conclusion.
