Two and a half cheers for Ofqual’s ‘standardisation model’ for GCSE, AS and A level grades – so long as schools comply

This blog has been kindly written for HEPI by Dennis Sherwood of Silver Bullet Machine, who has been tracking the state of this year’s public exams for HEPI.

On Friday, Ofqual announced the key principles that will be used to ensure this year’s GCSE, AS and A level grades will be ‘as fair as they can be’. Since there will be no exams, for each subject:

- Each school has to submit, to the appropriate exam board, their suggested ‘centre assessment grade’ for each candidate, and also the rank order of candidates.

- To ensure that grading is fair across the country, the board will then apply a ‘standardisation model’ to compare the submitted centre assessment grades to historical data relating to the actual grades awarded to that school’s candidates in 2017, 2018 and 2019 for A level, and, for GCSE, those years in which the exams have been graded 9, 8, 7….

- If a school’s centre assessment grades are different from those resulting from the ‘standardisation model’, some or all of the grades will be adjusted before being issued, but the submitted rank order will not be changed.

- Overall, the board will make sure that, at a national level, grade distributions are broadly in line with previous years.

To me, this all makes good sense. The rules are simple. There are no ‘behind-the-scenes’ statistics and the process can be replicated at every school. So teachers can have confidence that their centre assessment grades, submitted in compliance with their historical averages, will have a high likelihood of being confirmed rather than over-ruled. A measure of success of the process is therefore the ratio of confirmed centre assessment grades to the total number submitted, as determined for each school, each subject, each board and overall. The closer this number is to unity, the better.

Ofqual’s key objective is to prevent grade inflation – which is what the fourth bullet point is all about. To achieve that, the distribution of grades for each subject within each school must be the same as the average over recent years, hence the ‘standardisation model’. If this is the case for each school, then the aggregate will work too.

Subject to one nasty problem, as illustrated in this table, which shows the numbers of candidates awarded grade A* in six different schools over each of the last three years:

The total number of A*s awarded each year was 58, 62 and 60, and so from the board’s point of view, ‘no grade inflation’ means about 60 awards this year, and certainly not more than 62.

Each school has an average of 10, and a range of ± 2. If each school submits 10 this year, the sum is 60, which is fine.

But suppose each school says, ‘This has been a good year, and we think 11 students merit an A*. We’d really like to go for 12, but we appreciate that’s pushing the boat out; 11 should be safe.’

So the six schools each submit 11 candidates, giving a total of 66. Even though each school has behaved reasonably, grade inflation occurs. The board must intervene.

But how? Does the board go to each school and say ‘please explain?’, in response to which the school will indeed explain.

School C, for example, might claim that their data shows they have been on an improvement path, and so their 11 grade A*s are justified. But by the same token, if school C is improving, school D is declining. Should they be awarded only six?

Every school will have a reason why ‘we are a special case’, and these will be impossible to judge fairly. So to me, the most sensible option is for the board to apply exactly the same rule in exactly the same way to everyone, and reduce each school’s number to 10. That’s why the boards need the rank orders, so they can down-grade the lowest-ranked students.

To prevent grade inflation, for every school that submits 11, another has to submit 9. Which just won’t happen. So it’s in everyone’s interests to submit the average, 10.
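The arithmetic of the six-school example can be sketched in a few lines of Python. This is only an illustration of the capping rule described above, not the boards' actual model: the candidate labels, the function name `cap_to_average` and the way the ceiling is checked are all assumptions made for the sketch, while the figures (six schools, 11 submitted each, an average of 10, a ceiling of 62) come from the text.

```python
# Illustrative sketch only: names and structure are hypothetical.
HISTORICAL_AVERAGE = 10   # A*s per school, as stated in the text
NATIONAL_CEILING = 62     # highest yearly total quoted in the text


def cap_to_average(ranked_candidates, average):
    """Confirm the top `average` candidates in rank order; down-grade the rest."""
    return ranked_candidates[:average], ranked_candidates[average:]


# Six schools, each submitting 11 candidates for A*, listed in rank order.
schools = {name: [f"{name}{i}" for i in range(1, 12)] for name in "ABCDEF"}

submitted_total = sum(len(ranked) for ranked in schools.values())
confirmed_total = 0
downgraded = []
for name, ranked in schools.items():
    kept, dropped = cap_to_average(ranked, HISTORICAL_AVERAGE)
    confirmed_total += len(kept)
    downgraded.extend(dropped)

# The success measure suggested earlier: confirmed grades / submitted grades.
confirmation_ratio = confirmed_total / submitted_total

print(submitted_total > NATIONAL_CEILING)   # inflation before capping?
print(confirmed_total, downgraded, round(confirmation_ratio, 2))
```

With these numbers, 66 A*s are submitted, 60 are confirmed, and the lowest-ranked candidate in each school is down-graded, giving a confirmation ratio of about 0.91.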

But what about poor Isaac at school G? He is particularly gifted at Physics, and his school recommends him for an A*, even though the school has not achieved above grade B for years. The submission on behalf of Isaac will easily be identified as an outlier and so is quite likely to be disallowed. Isaac, however, will not be consulted; nor will his teacher. So Isaac will be awarded grade B, consistent with his place at the top of the rank order. He will be a victim, and his school too, for this year’s process traps all schools as prisoners of their pasts.

But before we weep too much on Isaac’s behalf, let us remember that Isaac is just one of the huge number of people disadvantaged (to say the very least) by this most pernicious virus, and although this is a pity, many people have suffered far more gravely, and without recourse to the autumn exam at which Isaac can prove his A*++.

And although Isaac is indeed a victim this year, let us not forget the 750,000 annual victims of the exam system in England – those who, in each of the last several years, were awarded a grade lower than they merited. Neither they, nor anyone else, knows this has happened, so they truly are victims of an unseen, unreported and unpunished injustice.

Despite these problems, I still believe that this year’s grades, resulting from the rank orders submitted on the basis of teacher judgement, will be fairer than those based on the rank orders determined by exam marks.

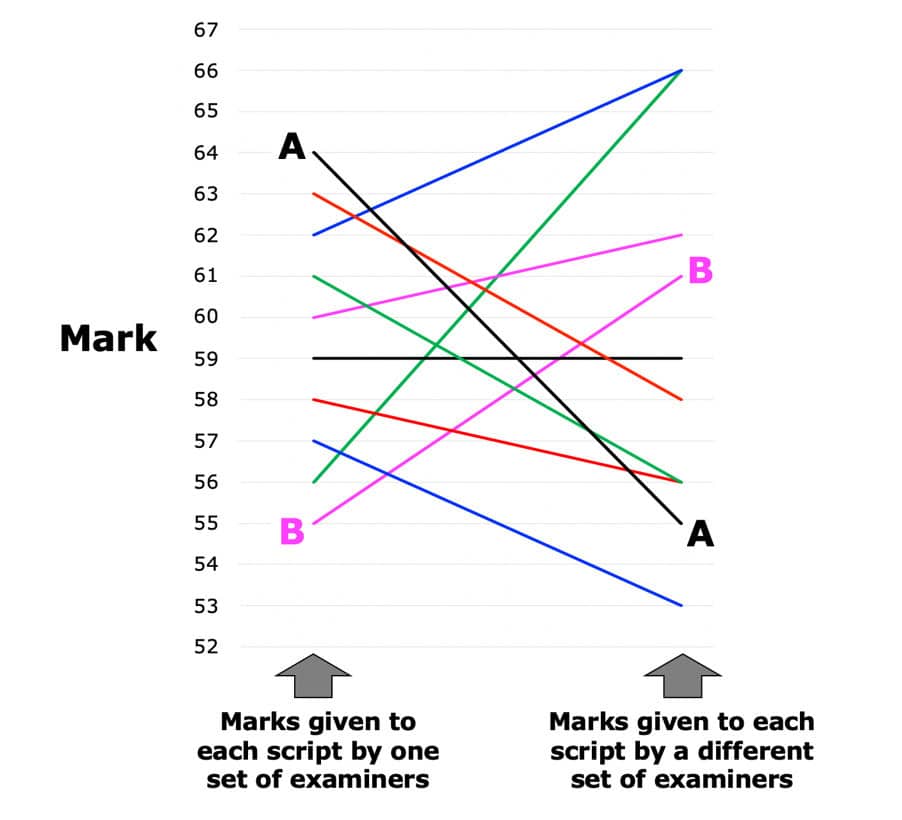

To illustrate this, here is a chart from my simulation of the marking and grading of 2019 A level Economics:

Suppose ten students from the same school take the exam, and each receives marks from 55 to 64 inclusive, as shown on the left. Candidate A is given the highest mark; candidate B the lowest. On the right are the results of my simulation of what happens when each script is marked by a different examiner, with all examiners, in both cases, drawn from the same pool. As can be seen, most of the marks are different: not because marking is sloppy, but because marking is ‘fuzzy’ – or, to use Ofqual’s words, because ‘it is possible for two examiners to give different but appropriate marks to the same answer’.


The consequences of fuzziness are dramatic. Candidate B is now ranked fourth, higher than candidate A, who is no longer ranked first, but ninth. Much else has changed too.

If all marks from 53 to 66 fall within the same grade width, then these changes in rank order do not matter. But if there are any grade boundaries within this range, the grades corresponding to the marks on the left are highly unreliable. Which is one of the explanations behind Ofqual’s infamous statement that ‘more than one grade could well be a legitimate reflection of a student’s performance’.
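For readers who want to see how easily a rank order can shuffle, here is a toy re-marking sketch. It is emphatically not the simulation behind the chart: the candidate labels, the seed and the assumption that a second examiner's mark differs by a uniformly random amount of up to `SPREAD` marks are all invented for illustration. Only the starting marks, 55 to 64, come from the text.

```python
import random

SPREAD = 4  # hypothetical: a second examiner may differ by up to +/-4 marks


def remark(marks, rng, spread=SPREAD):
    """Return a new mark for every script, as if marked by another examiner."""
    return {cand: mark + rng.randint(-spread, spread) for cand, mark in marks.items()}


def rank_order(marks):
    """Candidates sorted from highest mark to lowest."""
    return sorted(marks, key=marks.get, reverse=True)


# Ten candidates with marks 64 down to 55, as in the example in the text.
original = {f"cand{i}": 65 - i for i in range(1, 11)}

rng = random.Random(2020)  # fixed seed so the run is repeatable
remarked = remark(original, rng)

print(rank_order(original))
print(rank_order(remarked))
```

Running it with different seeds shows the same thing each time: when the marks are this close together, even modest differences between examiners are enough to reorder the candidates.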


The rank orders used to determine GCSE, AS and A level grades have been unreliable for about a decade. Surely the rank orders based on teacher assessments will be more reliable, and the resulting grades therefore fairer.

And even if poor Isaac is a victim of this year’s process, there will be far fewer victims than in previous years. That’s why this year’s process is very likely to give fairer results than hitherto – and more trusted too, especially if all schools submit centre assessment grades that comply with the rules, so that the ratio of confirmed grades to the number of grades submitted is nearly one.

Comments

Kieran McLaughlin says:

Fascinating as ever


Charles Ben-Nathan says:

I think there is a flaw in the conclusion that the teacher assessment will only be more trusted if grades fall in line with previous years.

As teachers we are best placed to know how students will perform and their level of understanding of the subject. That said, all teachers will know that on results day they are often surprised by those students who over-perform and those who under-perform. This is impossible to account for with CAGs and rankings. By the very definition of the words “over-perform” and “under-perform” these are deviations from expectation. We can’t expect the unexpected, at least not so precisely. Furthermore, it is much easier to under-perform than over-perform.

For a student to make an unexpected leap up a grade may be evidence of some last minute hard work that teachers were unaware of, of elements of the subject suddenly clicking, and of course some marginal pupils will tip themselves over a boundary. There is also the randomness of marking that you do allude to. In terms of the boundary pupils, some marginal pupils tip themselves the other way, and also the vagaries of marking will push some pupils below their ‘true scores’. However, I suspect that in terms of exam day performance there is really only net downward pressure. Pupils are much more likely to go into an exam and forget crucial information they know than have information arrive in their minds that they never learnt. Similarly, they are more likely to make mistakes with technique than have a lucky guess, or the perfect paragraph appear.

This means that exam grades and results generally are always lower than they ‘should’ be and what students deserve based on their proven ability in the subject. I would disagree with your conclusion and say rather than a modest increase in grades discrediting teacher assessment, a maintenance of the grades would discredit teacher assessment which would be then seen as falling into line with and condoning a very flawed exam system.


Rob Cuthbert says:

Who will be the one to tell Isaac: “you’re suffering unfairly but most people aren’t, so that’s OK”! The difference here is that unfairness is a direct consequence of the system adopted, not an unintended byproduct of fuzzy marking. As I understand it there is no appeal against anything but arithmetical or similar errors in the process. The standardisation method itself cannot be criticised, and it is designed to deliver a similar profile of results overall, not to be fair to individuals. Penalisation of schools/centres with poor track records is therefore baked into the method, and there is no recourse other than an Autumn ‘resit’ – which probably imposes a gap year for all who wish to resit, with inevitable financial and academic penalties. Is planned unfairness better than accidental unfairness in a flawed system? It might take a judicial review to find the answer.


Dennis Sherwood says:

Hi Rob – you raise very valid, and important points, in a most articulate way! And I agree with you about the unfairness to Isaac.

But I don’t think I agree that the unfairness of exam marking is an “unintended byproduct”. It may not have been deliberately intended at the outset, like some form of cruel punishment. Indeed, that is not the case, for in the ‘old days’, great care was taken over candidates close to boundaries. That has long since gone for school exams, and the continued existence of the problem is to me not an “unintended byproduct” but cast-iron evidence of a deliberate policy not to solve it.

Ofqual’s announcement of 11 August 2019 states “…more than one grade could well be a legitimate reflection of a student’s performance…”, preceded by “This is not new, the issue has existed as long as qualifications have been marked and graded.”

So the unfairness attributable to fuzzy marking is known to have deleterious consequences, and has been known for a long time. But nothing has been done to fix it. Which is not difficult to do – see, for example, https://www.hepi.ac.uk/2019/07/16/students-will-be-given-more-than-1-5-million-wrong-gcse-as-and-a-level-grades-this-summer-here-are-some-potential-solutions-which-do-you-prefer/.

I certainly don’t think that this year’s process will be ‘perfect’: I just hope that it will result in fairer outcomes than the past, which it might and can. In England at any rate – what’s happening in Scotland seems to me to be nuts (https://www.tes.com/news/its-impossible-meet-sqa-grading-demands).

I also hope that fairer outcomes this year will enable an exploration of an overall better way of doing things. A better way that makes sure all Isaacs are never victims again.


Janet Hunter says:

Hello Dennis,

Thank you for an interesting read. I came across your article, after reading the government document outlining how GCSE and A Level grades will be calculated, as a parent of a Year 13 outlier, looking for an explanation of what the standardisation process might mean for such pupils. Your article was clear and helpful but did not do much to dispel my fear that 13 August might not be the day of celebration we were looking forward to 3 months ago.

I have a couple of observations I would like to address here and would welcome your thoughts.

Firstly, looking at the example you have given here, there are 6 schools who regularly have around 10 students achieving A* in Physics and one school that does well to achieve a top grade of B. Isaac, being the student who doesn’t fit the statistical model of his school is bumped down to a B to avoid overall grade inflation. My first question then is what happens to the grades of the other students taking Physics in his school? If the next pupil in the rankings was teacher assessed as a grade B, surely knocking two grade levels off Isaac’s assessment will also have to impact on the grade for the second pupil, since as the teacher assessment had them two grades apart, it would make no sense for them to end up with the same grade. Any grade reduction for outliers would therefore also end up disadvantaging other students in that school.

Secondly, your explanation of fuzzy marking suggests that one in every four grades in a normal year is wrong. This might be advantageous or disadvantageous to an individual student. If A Level students on average take 3 subjects, it might not affect every student and for students who are affected, is likely to only affect one subject.

However in your example of Isaac above, whose assessed A star grade is bumped down because it does not fit the historical school statistics, the underlying assumption is that his school does not have pupils of Isaac’s ability (so the assessment must be wrong) and/or the teachers are not able to teach to an A star level. But let’s assume that Isaac is an A star Physics pupil. The chances are that he works to a high level and is an outlier in his other subjects too. Therefore, unless the school just has a particularly poor Physics department, there is a high probability that he will be marked down in his other subjects as well. Let’s assume that he has been predicted A* in Physics and As in his other subjects and has firm and conditional offers at Universities based on these predicted grades. In a normal year he might be unlucky and receive a lower grade in one subject, giving him either AAA or A*AB. This will be disappointing but unlikely to be a disaster. This year however, due to his school statistics, and other, more successful, schools bumping up their numbers slightly, he loses a grade in every one of his subjects. He now has ABB and his university choices look a lot more shaky, as does his self-confidence, all because he went to a school with a comparatively lower historical level of achievement. That’s a bit more problematic than inconsistent marking, as it embeds inequality between different institutions. I truly hope that does not happen this summer.


Dennis Sherwood says:

Hi Janet

Thank you for this very thoughtful contribution; you raise important points, which will be on many people’s minds.

I’ll try to answer as clearly and helpfully as I can, but let me firstly note that I am not an ‘insider’, I don’t work for Ofqual, the boards, or government. I am an independent, and the documents published by Ofqual are my only source of information. But I have thought about things a bit too.

Your first point about the grades of Isaac’s friends.

What I believe will happen is that school G will recognise Isaac’s gifts, and suggest he is awarded an A* in Physics; the other Physics students are OK but not exceptional, so Laura and Mary are assessed as B, and Peter as C. They then submit those grades (the distribution being 25% A*, 50% B, 25% C), and the rank order Isaac, Laura, Mary, Peter.

The board then carries out their process of ‘statistical standardisation’. On Friday, Ofqual published the results of their recent consultation. They haven’t spelt out all the details, but they did say “…The statistical standardisation model should place more weight on historical evidence of centre performance (given the prior attainment of students) than the submitted centre assessment grades…” (page 10).

Things were more explicit in a previous statement published on 15th May: “For AS/A levels, the standardisation will consider historical data from 2017, 2018 and 2019. For GCSEs, it will consider data from 2018 and 2019, except where there is only a single year of data from the reformed specifications.”

Furthermore, they’ve also said “the trajectory of centres’ results should not be included in the statistical standardisation process” (page 11).

My hunch is therefore that the boards will compare the submitted distribution to the corresponding three-year average for students from that school in that subject, and not more than that. But that’s a hunch, not definitive!

So let’s suppose that for school G, that average, for Physics, is 50% B, 50% C.

That’s it. Isaac and Laura will be awarded a B; Mary and Peter a C.
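To make the mechanics of that hunch concrete, here is a small sketch that walks down a submitted rank order and hands out grades according to a historical distribution. The function name `grades_from_history`, the use of rounding to turn percentages into head-counts, and the rule that any leftover students receive the lowest historical grade are all assumptions for the sake of illustration; Ofqual has not published this level of detail.

```python
def grades_from_history(rank_order, historical_shares):
    """Assign grades down the rank order in line with historical shares.

    rank_order: student names, best first.
    historical_shares: (grade, share) pairs, best grade first, summing to 1.
    """
    n = len(rank_order)
    awarded = {}
    start = 0
    cumulative = 0.0
    for grade, share in historical_shares:
        cumulative += share
        end = round(cumulative * n)  # assumed rounding rule
        for student in rank_order[start:end]:
            awarded[student] = grade
        start = end
    # Guard against rounding gaps: anyone left gets the lowest historical grade.
    for student in rank_order[start:]:
        awarded[student] = historical_shares[-1][0]
    return awarded


rank_order = ["Isaac", "Laura", "Mary", "Peter"]
school_g_physics = [("B", 0.5), ("C", 0.5)]  # the three-year average assumed in the text

print(grades_from_history(rank_order, school_g_physics))
```

Note that the submitted grades never enter the calculation: only the rank order and the school's history do, which is exactly why Isaac's A* disappears.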

The rank order as submitted by the schools will be maintained (pages 11 and 12 here) – which is important, for it indicates that there’s something the boards will not do. They won’t say “Ah! We know that Laura only got a 2 in Physics GCSE. No way could she merit a B at A level. So we’ll (unilaterally) downgrade her to a D, which, according to all our statistics, is the highest grade ever achieved at A level Physics by a student who got a 2 at GCSE.” They can’t do that because that would require a change in the rank order from Isaac, Laura, Mary, Peter to Isaac, Mary, Peter, Laura. Which they have undertaken not to do.

One more point here if I may. In practice, that average, 50% B, 50% C, will be associated with some variability, which might cause those submitting centre assessment grades to seek to use some ‘wriggle room’, and to believe that it’s safe to submit grades that are above the average, but not over-the-top. That’s dangerous, as my blog describes. The only ‘wriggle room’ is what Ofqual might tolerate as regards overall subject-level grade inflation. But I’m not holding my breath on that one. The last thing Ofqual want is to be seen to have lost control.

Your second point about Isaac’s performance in other subjects. Your hypothesis that he might be pretty bright across-the-piece makes sense to me, and your point is powerful.

Ofqual have confirmed that all the ‘statistical standardisation’ will “operate at subject level, not centre level”, so, within each school, each subject is taken on its own. So if Isaac’s school has a good historic track record of A*s in, say, Further Maths, Isaac’s A* here is safe. Where Isaac really loses out is when he is a star in an otherwise uniformly dull sky, and I really can’t see a way out of that one. What might happen, though, is that university admissions officers decide not to take a ‘legalistic’ approach this year, and – being aware of this year’s context – look beyond the published grades.

So your point about a gifted individual being unfairly dragged down by the school’s weak past record is certainly valid. It can happen and it probably will. But I sincerely hope in very few cases, and I also hope that university admissions officers are alert to this possibility, and that the school will make a big fuss on that student’s behalf.

But even with all this, I still believe that there will be less unfairness this year than in previous years, primarily because of the (in my view) better reliability of a teacher’s rank order as compared to the exam-rank-order-lottery, as discussed in the blog.

So let me finish with this thought. Consider candidates who sat A level in 2019.

Chris did Maths, Further Maths and Physics. Ali did English Language, English Literature and History.

What is the probability that both Chris and Ali were awarded the grades (of all flavours) that they truly merited?

Using the grade reliability data published in November 2018 in Ofqual’s Marking consistency metrics – An update, my calculation gives these results:

Chris: 0.96 x 0.96 x 0.88 = 81%

Ali: 0.61 x 0.58 x 0.56 = 20%.

And the probabilities that all three awarded grades are wrong are:

Chris: 0.04 x 0.04 x 0.12 = about 0.02% (say, 2 in 10,000)

Ali: 0.39 x 0.42 x 0.44 = about 7% (that’s 700 in 10,000)

As a by-the-by, the cohort for 2019 A level English Language (in England) was 14,144; English Literature, 40,824; History, 51,438. It is by no means improbable that 10,000 of those did all three. Of whom about 700 were awarded three wrong grades. And there could be a gender bias as regards subject combinations too.
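The calculation is easy to reproduce. The sketch below takes the per-subject grade reliabilities as 0.96, 0.96 and 0.88 for Chris's subjects and 0.61, 0.58 and 0.56 for Ali's, and, like the multiplication above, assumes that the three subjects are independent of one another. The dictionary and function names are just labels chosen for the example.

```python
from math import prod

# Probability that the awarded grade is the 'right' one, per subject.
reliability = {
    "Chris": [0.96, 0.96, 0.88],  # Maths, Further Maths, Physics
    "Ali": [0.61, 0.58, 0.56],    # English Language, English Literature, History
}


def all_right(probabilities):
    """Probability that every grade is right, assuming independence."""
    return prod(probabilities)


def all_wrong(probabilities):
    """Probability that every grade is wrong, assuming independence."""
    return prod(1 - p for p in probabilities)


for name, probabilities in reliability.items():
    print(
        f"{name}: all three right {all_right(probabilities):.0%}, "
        f"all three wrong {all_wrong(probabilities):.2%}"
    )
```

If the subjects are not independent (for example, if a candidate who is hard to mark in one essay subject is hard to mark in another), the true figures would differ, so these numbers are best read as an indication of scale.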

This unfairness has been hidden for years. And aggravated by the fact that since 2016, the ‘victims’ have had no grounds for appeal.

So, I hope that’s helpful. Thanks again, and if you have any further points, please do post another ‘comment’, or do get in touch with me directly at [email protected].


Victoria says:

A very interesting article and comments which raise many valid points. I am interested in your views on the consultation outcome which stated that Ofqual were still looking at how the statistical analysis could be sensitive to small centres and cohorts. How do you think this might apply to Isaac and his friends? Is there more hope for him to receive the results he deserves in this scenario?


Michael Bell says:

Hi Janet

I am in the same position as you are i.e. the parent of a Year 13 outlier, with my daughter’s predicted A level grades of A*/A/A in a school that hasn’t managed above a C in the subjects she’s taking (she achieved 9s at GCSE so we have objective evidence she is a high achiever).

Like you I have huge fears for 13 August, and to me, regardless of the points Dennis makes, it still seems inherently wrong that my child will suffer simply because she happens to attend a school that has poor historic performance. Someone else has posted here “Penalisation of schools/centres with poor track records is therefore baked into the method” – this is undoubtedly true and must surely be challenged? A judicial review has been mentioned as well and I wonder whether this is the only way we can ensure our children receive the results they deserve.

There must surely be other parents/students out there in the same position and we should be looking to join together to take action on this.


Dennis Sherwood says:

Hi Victoria

Yes, small cohorts present particular, and important, problems. As I mentioned in my response to Janet, I don’t “know” the answer, but I think I can offer some thoughts.

The extreme case is the historic cohort of zero. At my school, for the first time, I have a student in, say, A level Arabic; the student has been taught by a new teacher to my school, a Syrian refugee whose day-job is to teach maths, but who has been kind enough to teach this student too. Though not a native speaker, the student is certainly diligent, but the teacher, being new, has no basis to judge the standard. So we submit an A*.

The board has no relevant history against which to judge this submission, although they do have information on previous whole cohorts. But that information has no relevance in this specific case.

To me, the only sensible outcome here is for the board to accept the submission and award the A*. Arabic is a small-cohort subject overall, and that A* will have little effect on the whole cohort distribution; even if it does, no politician is going to kick up a fuss about grade inflation in Arabic – and it’s the political fuss that Ofqual wishes to avoid.

That, I think, is an easy case; much tougher is a school which, for several years, has had a small cohort – say 10 or fewer – in a mainstream subject such as, say, Physics.

I’ve done lots of simulations of small cohorts, and the ‘take home’ message is that the statistics are, in general, all-over-the-place, in that (with reference to the table in the blog) the range for any grade is often quite wide. So if Isaac’s school has had 1 grade A* in the last three years, there is at least a precedent, even though 1 A* per year is well above the average of 0.33. The worst situation for Isaac is the one I portrayed: small historic annual cohorts and no grade higher than a B.

But even so, the school could still submit an A* for Isaac, and have that grade confirmed, for the boards know that constraining submissions to the grade average (in the table, 10) has increasingly less statistical validity the smaller the cohort. And I am confident that they wish to be as fair as possible to everyone, whilst still under orders from Ofqual to stop grade inflation.

So, if I were the board, I would adopt a rule along the lines of “If the aggregate grade distribution for this subject across all schools shows grade inflation, so that some schools’ submitted grades must be over-ruled, start with the schools with the largest cohorts, keep going with progressively smaller cohorts until everything works, and then stop”.

That ensures “no grade inflation”, but puts small cohorts at the end of the process, with the possibility that the process never reaches them. To me that makes sense – it recognises that the statistics work better for large cohorts than small ones, and is pragmatic: the process has to start somewhere, so that gives a rule for where to start. In the example in my blog, this did not apply: there were only six schools, with identical cohorts. So imagine other columns for schools with cohorts of 100 each; schools A to F could be left alone if schools J and K had gone over the top. So I think my “play safe, not games” message is still valid, and, I trust, sensible.
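Here is one way that "largest cohorts first" rule might look in code. It is a sketch of my reading of the rule, not anything a board has published: the function name, the decision to cap an over-ruled school at its historical average, and every number in the example (cohort sizes of 30, averages of 30 for schools J and K, a ceiling of 126) are hypothetical.

```python
def standardise_largest_first(schools, national_ceiling):
    """Over-rule schools in descending cohort size until the total fits.

    schools: list of dicts with keys 'name', 'cohort', 'submitted', 'average',
             where 'submitted' and 'average' count top grades.
    Returns a dict mapping school name to the confirmed number of top grades.
    """
    confirmed = {s["name"]: s["submitted"] for s in schools}
    total = sum(confirmed.values())
    for school in sorted(schools, key=lambda s: s["cohort"], reverse=True):
        if total <= national_ceiling:
            break  # everything works, so stop before reaching smaller cohorts
        excess = max(0, school["submitted"] - school["average"])
        confirmed[school["name"]] -= excess  # assumed: cap at historical average
        total -= excess
    return confirmed


# Hypothetical data: six small schools submitting 11 each, two large ones over the top.
schools = [
    {"name": n, "cohort": 30, "submitted": 11, "average": 10} for n in "ABCDEF"
] + [
    {"name": "J", "cohort": 100, "submitted": 34, "average": 30},
    {"name": "K", "cohort": 100, "submitted": 33, "average": 30},
]

print(standardise_largest_first(schools, national_ceiling=126))
```

In this made-up case, bringing J and K back to their averages is enough to reach the ceiling, so schools A to F keep all 11 of their submitted grades. With a tighter ceiling the loop would carry on into the smaller schools.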

But let me stress once again that I don’t know, and what actually happens might be different…


Dennis Sherwood says:

Michael, Janet – yes, it is wrong.

Ofqual’s original announcement of 20th March (https://www.gov.uk/government/news/further-details-on-exams-and-grades-announced) stated that teachers will be invited to submit grades, but did not mention rank orders; it also left the door open for some form of dialogue between boards and schools to enable schools to justify outliers.

That door closed with Ofqual’s consultation document, published on 15th May (https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/879627/Exceptional_arrangements_for_exam_grading_and_assessment_in_2020.pdf), which introduced rank orders; the door was firmly bolted when the results of the consultation were announced on 22nd May (https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/887048/Summer_2020_Awarding_GCSEs_A_levels_-_Consultation_decisions_22MAY2020.pdf).

Second-guessing all this, I can only conclude that Ofqual feared that too many schools would seek to ‘game’ the process, submitting over-the-top grades, blowing grade inflation sky high. I also conclude that they decided it would be at best time consuming and at worst impossible to distinguish between the chancers and the valid outliers. The upshot of all that, most unfortunately (and I really do mean that), is that the “Isaacs” will be damaged. And my understanding of the process for appeals leads me to believe that the only grounds for appeal are very narrow and technical, and would not help “Isaac”.

But I still believe that teacher rank orders are much more reliable than the exam lottery, even if the grades determined by those rank orders are constrained by history. And it is my fervent hope that this year’s process will be seen to be a success, and does indeed open the door to the design and implementation of a future assessment process in which all the candidates, including “Isaac”, are given awards that are both fair and reliable.

In case it might be of interest, may I draw your attention to a consultation on “The Impact of Covid-19 on education and children’s services”, currently being undertaken by the Parliamentary Committee on Education. This specifically includes “The effect of cancelling formal exams, including the fairness of qualifications awarded and pupils’ progression to the next stage of education or employment”.

This consultation is currently accepting evidence – https://committees.parliament.uk/work/202/the-impact-of-covid19-on-education-and-childrens-services/.

If as many people as possible in your position might be willing to make a submission…


Michael Bell says:

Dennis,

Appreciate you taking the time to provide the link to the current consultation, thanks.

Michael


Janet Hunter says:

Hello Dennis,

I just wanted to say thanks for your reply. I did have another question about small cohorts, which is covered by your answer to Victoria’s post. In my son’s case, we perhaps don’t need to be too concerned as it is a new school with only one year’s worth of A Level results. For two of his subjects, there are no historical results and for the other two, the previous year’s cohorts were 3-5 students. There are GCSE results of course, but these should show an outlier in 2018!

Michael, I agree, it is a huge concern. I agree with Dennis’s point that universities will no doubt give a bit more flexibility this year, so in the longer term grades below what you know a pupil would have achieved (and with plenty of evidence to support this which won’t have been considered) perhaps won’t matter too much, but the sense of injustice experienced on the day of results itself might be quite damaging. I would certainly want to take some sort of action in that event.


Dennis Sherwood says:

hi everyone – super, my pleasure, thank you!


E. Richardson says:

My daughter is a Cambridge offer holder due to study medicine after shadowing GPs, hospital doctors and surgeons, personal statement, very high GCSE results, taking interviews and scoring highly in the BMAT admissions test. She requires a minimum A*A*A to complete her offer and is expected to achieve A*A*A*A*, after achieving A*A*A*A* in her mocks and all other assessments. Unfortunately the school is not high achieving. Cambridge has already stated that if the offer is not achieved she may take the Autumn exams but not be able to start the course until 2021. Could she be forced to take a year out delaying her 6 year medical course through no fault of her own due to standardisation and students results from years ago? There are going to be many thousands of high achieving students requiring the grades they deserve to progress but not in high achieving schools.


Dennis Sherwood says:

Thank you. Yes, a policy of strict adherence to a school’s past is particularly damaging to talented students at a school with a recent history of relatively poor performance. And visibly so.

Across the country, the cohorts for your daughter’s subjects will be large, and if her school’s cohort is small, it may be that she might maintain her recommended A*s as a result of being “protected” by schools with larger cohorts, as I outline in my reply to Victoria.

Also, it is (I think) technically possible for a board to use an algorithm something like “Before the statistical standardisation model over-rules a centre assessment grade which would result in a down-grade, and working from top to bottom according to the submitted rank order, check (1) each student’s GCSE performance (2) the statistical probability that a high-performing GCSE student will achieve the centre assessment grade as submitted and (3) if that probability is equal to, or greater than, a threshold of [x] %, confirm the centre assessment grade; if not, over-rule and down-grade”. This preserves the rank order, and would be fair to your daughter, but can work only if there is reliable data relating to, for example, GCSE results or KS2 – mock results cannot be used, for the board does not know them. But if this data is not available – for example, for a student joining the school’s Sixth Form from overseas – even that doesn’t work.
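As a rough sketch of how such an algorithm could work, consider the code below. Everything in it is hypothetical: the function names, the idea of passing in a per-student probability derived from prior attainment (`p_cag`), the threshold value, and the extra guard that stops a student ending up with a better grade than someone ranked above them. That guard is my own addition to keep the rank order intact; the boards have not said they would do any of this.

```python
GRADES = ["A*", "A", "B", "C", "D", "E", "U"]  # best to worst


def worse(a, b):
    """Return whichever of two grades is the lower one."""
    return a if GRADES.index(a) >= GRADES.index(b) else b


def review_downgrades(rank_order, cag, modelled, p_cag, threshold):
    """Work down the rank order, confirming a CAG where prior attainment supports it.

    cag:      centre assessment grade per student.
    modelled: grade produced by the statistical standardisation.
    p_cag:    hypothetical probability, from GCSE or similar data, that a student
              with this prior attainment achieves the CAG; missing if no data.
    """
    final = {}
    ceiling = GRADES[0]
    for student in rank_order:
        grade = modelled[student]
        is_downgrade = GRADES.index(grade) > GRADES.index(cag[student])
        p = p_cag.get(student)  # None when there is no prior-attainment data
        if is_downgrade and p is not None and p >= threshold:
            grade = cag[student]  # confirm the submitted grade
        grade = worse(grade, ceiling)  # added guard: never invert the rank order
        final[student] = grade
        ceiling = grade
    return final


rank_order = ["Isaac", "Laura", "Mary", "Peter"]
cag = {"Isaac": "A*", "Laura": "B", "Mary": "B", "Peter": "C"}
modelled = {"Isaac": "B", "Laura": "B", "Mary": "C", "Peter": "C"}
p_cag = {"Isaac": 0.8, "Mary": 0.3}  # invented probabilities

print(review_downgrades(rank_order, cag, modelled, p_cag, threshold=0.6))
```

In this invented example Isaac keeps his A* because his prior attainment clears the threshold, Mary is still down-graded because hers does not, and a student with no prior data at all simply receives the modelled grade.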

Ofqual and the boards, I am sure, recognise this issue, but I don’t know the details of either their policies or their algorithms. The SQA has explicitly decided not to disclose them (https://www.sqa.org.uk/sqa/94257.html); in the report of Ofqual’s consultation, we read “The statistical standardisation model should place more weight on historical evidence of centre performance (given the prior attainment of students) than the submitted centre assessment grades where that will increase the likelihood of students getting the grades that they would most likely have achieved had they been able to complete their assessments in summer 2020.” (https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/887048/Summer_2020_Awarding_GCSEs_A_levels_-_Consultation_decisions_22MAY2020.pdf, page 10), which says, obliquely, that “history wins”. And page 11 says “we have decided to adopt our proposal that the trajectory of centres’ results should not be included in the statistical standardisation process”, which cuts both ways: a school whose performance is declining will not be “penalised”, but by the same token, a school whose performance is improving will not be recognised.

I certainly don’t want to “teach grandmothers… (!)”, so please forgive me if I am doing so; but if I were in a similar position, I would seek to get the key players to state, explicitly in writing, their policies for how they are planning to deal with this known, and anticipated-in-advance, situation of the talented student at a school with a weak track record. The college to which your daughter has applied, Ofqual, and your exam board all know this will happen, and (we would all hope) will have a corresponding policy already in place to deal with it fairly.

It is possible that the College policy is “that’s tough, we’re just going by the grade as awarded”; the board “our policy is to use the average of the last three years, and that’s that”. I don’t know. But my personal opinion is that it is fair for you, and indeed anyone else, to ask those questions, for they are questions of policy and not special-pleading for a particular case. Also, by asking those questions now, before the process has started (noting that grades have to be submitted to the boards between Monday 1 June and Friday 12 June), you are putting some valuable stakes in the ground.

It may be that the answers you receive are vague or evasive – but that too could be valuable evidence to store away.

I mentioned the Education Select Committee consultation in my previous reply – you can submit evidence to that on https://committees.parliament.uk/work/202/the-impact-of-covid19-on-education-and-childrens-services/.

The Committee is sitting now, and you have the right to make direct contact, especially if your local MP happens to be a member – https://committees.parliament.uk/committee/203/education-committee/membership/. And given that centre assessment grades are a current and important issue, they might take notice right away: for example, TES reported their session just a couple of days ago with the Schools Minister, Nick Gibb, in which he talked of the “big burden” this process is placing on teachers (https://www.tes.com/news/coronavirus-gibb-calculating-grades-big-burden-teachers).

I do hope everything works out for your daughter in the end!

Reply

Michael Bell says:

E. Richardson – I am really sorry to hear that Cambridge are being so inflexible given the circumstances. One would have hoped they would look at this in the round and make a judgement that your daughter is clearly good enough to take up her offered place. How hugely unjust.

We could all sit and hope that this standardisation process does not in the end adversely affect our children’s chances, but given my research and the content of this blog post I do not feel particularly optimistic that’s going to be the case. On that basis, I have spoken to a couple of solicitors operating in the education sector and they are talking to barristers interested in this topic. In due course I will be setting up a campaign on Crowdjustice to try and challenge Ofqual’s proposed methodology and lack of appeals process for the individual and move towards a judicial review. The more people who band together and shout loudly about this the better the chance we might get heard.

Reply

Huy Duong says:

Hi Dennis and everyone,

Thank you for the very interesting article and discussion. What Dennis said about cohorts of less than 10 students is spot-on.

My son’s school has 7 students in this year’s Further Maths cohort (another school I know has 3). Last year, the school had 5 students taking Further Maths, of whom two got A*, two got A and one got E. A few observations:

First, as many people have mentioned, with such small cohorts, the percentage getting each grade will fluctuate a lot from year to year. There is no statistical magic that can give us a worthwhile prediction of the grade distribution.
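To put rough numbers on that fluctuation, here is a minimal sketch in Python. It assumes a simple binomial model in which each student independently has the same chance `p` of getting a given grade; that model, the function name and the comparison cohort of 200 are assumptions for illustration, not anything Ofqual has published. The 40% figure is last year's two A*s out of five.

```python
from math import sqrt


def share_standard_error(p, n):
    """Standard error of the observed share of a grade in a cohort of n
    students, if each independently gets that grade with probability p."""
    return sqrt(p * (1 - p) / n)


# 40% A* last year (2 of 5); cohorts of 5 and 7 as in the comment,
# plus a hypothetical large cohort of 200 for comparison.
for n in (5, 7, 200):
    print(n, round(share_standard_error(0.4, n), 3))
# 5 0.219
# 7 0.185
# 200 0.035
```

On these assumptions, a swing of about 20 percentage points either way from one year to the next is unremarkable for a cohort of five, against three or four points for a cohort of 200.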

Second, the fact that two got A*, two got A and one got E suggests that there is more dependence on the individuals’ abilities than the school’s teaching, much less on previous years’ teaching, so basing the statistical model on the school’s past performance might not make sense in this case.

Another point, related to the one above, is that in previous years the school had 1 or 2% of each year getting Oxbridge offers for all subjects, while this year about 7% got Oxbridge offers for STEM subjects alone. This suggests that this year there might be more students capable of getting A* in STEM subjects than in previous years, and trying to constrain the number of A*s to those of previous years might not be fair, and could be disastrous for the Oxbridge offer holders.

It is true that exams and exam marking are not perfect. However, every student in an outlier cohort has the option to protect themselves against these traditional risks by doing the preparation to give themselves as large a safety margin as possible. With this year’s arrangement, it is impossible for every student in an outlier cohort to protect themselves against the new risks – some might be knocked out regardless of how good they are, so that past statistics are satisfied.

I wonder if there is a legal problem when students sign up and spend 18 months working towards grades that are to be awarded in one way, then with two to three months to go, the goal posts are moved. Some students have accepted and rejected university offers for 2020 entry based on which grades they are likely to get in the exams, and now the grades will be awarded in a completely different way, potentially causing them to miss their offers.

Reply

dennis says:

I work with quant models; let’s not make it difficult:

1. A complete lack of trust in teachers’ judgment

2. Discrimination by generation

3. Discrimination by school

Allowing appeals only by schools, and not by pupils, is ridiculous and anti-democratic.

Grades predicted by the teachers who have followed the students every day would have been fairer.

No comment on the inability to proceed with remote tests (and on-site tests for the few without broadband).

Reply

dennis says:

Hi Huy,

I completely agree with you.

Expected grades were predicted pre-Covid with accuracy because schools do not want to inflate them and then miss the end results.

So the real offers received from University should have been confirmed with the approval of the teachers.

Again, from a legal point of view, how can a single school appeal against a supervisory body (Ofqual)? No school will want to do that.

Please let me know if you will have a group of parents that want to proceed with a class action. It is a clear discrimination by school and generation in my view.

KR

Reply

E. Richardson says:

Thank you Dennis and Michael for your detailed and interesting replies. These are worrying times and my daughter is pushing on with revision regardless, in case the standardisation process ruins her future plans.

I do wish to mention that Cambridge has been nothing but highly impressive in the way it has handled its admissions process. Its holistic approach has been incredibly thorough, with admissions testing and interviews as well as past exam performance and work experience being taken into account. I only hope they see their way to look beyond any anomalies in performance that may be thrown up through standardisation. I appreciate that my worries about this worst-case scenario may end up being unwarranted and really do hope that others are not disadvantaged either… but worry I will.

Reply

Dennis Sherwood says:

Ah! Thank you, Rob Cuthbert, for spotting the errors I made in my reply to Janet in connection with ‘Chris’ and ‘Ali’! Much appreciated! My mistake, and my apologies accordingly.

In my reply to Janet, I said this:

Probability that all three grades are right:

Chris: 0.91 x 0.91 x 0.88 = 81%

Ali: 0.61 x 0.58 x 0.56 = 20%.

And the probabilities that all three awarded grades are wrong are:

Chris: 0.09 x 0.09 x 0.12 = about 0.02 % (say, 2 in 10,000)

Ali: 0.39 x 0.42 x 0.44 = about 7% (that’s 700 in 10,000)

I’ve now double-checked, and believe that the numbers for ‘Ali’ are all correct.

The error concerns the numbers for the reliabilities of maths and further maths grades for ‘Chris’.

According to Figure 12 in Ofqual’s Marking Consistency Metrics – An update, the reliability of Maths and Further Maths is 96%, and of Physics, 88%.

So the probability that Chris receives three correct grades is

0.96 x 0.96 x 0.88 = 81%

and of three wrong grades

0.04 x 0.04 x 0.12 = 0.02%

When I was looking at my spreadsheet and reading numbers across to write my reply to Janet, I typed ‘0.91’ instead of the correct number ‘0.96’. I then compounded the error by not looking at my spreadsheet for the ‘all wrong’ numbers, but just looked at the numbers I had just typed to determine 1 – 0.91 = 0.09.

So, overall, my reply to Janet should have said:

Probability that all three grades are right:

Chris: 0.96 x 0.96 x 0.88 = 81%

Ali: 0.61 x 0.58 x 0.56 = 20%.

And the probabilities that all three awarded grades are wrong are:

Chris: 0.04 x 0.04 x 0.12 = about 0.02 % (say, 2 in 10,000)

Ali: 0.39 x 0.42 x 0.44 = about 7% (that’s 700 in 10,000)

Wow!

So apologies again, and thank you, Rob!
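For anyone who would like to re-check the corrected arithmetic, a few lines of Python will do it. This is only a sketch: it takes the per-subject reliabilities quoted above as given and, like the calculation above, treats the three subjects as independent, which is an assumption.

```python
from math import prod

# Per-subject grade reliabilities as quoted in this comment.
reliabilities = {
    "Chris": [0.96, 0.96, 0.88],  # Maths, Further Maths, Physics
    "Ali": [0.61, 0.58, 0.56],
}


def all_right(rels):
    """Probability that every grade is right, assuming independence."""
    return prod(rels)


def all_wrong(rels):
    """Probability that every grade is wrong, assuming independence."""
    return prod(1 - r for r in rels)


for name, rels in reliabilities.items():
    print(f"{name}: all right {all_right(rels):.0%}, "
          f"all wrong {all_wrong(rels):.2%}")
```

Running it reproduces the rounded figures above: about 81% and 0.02% for Chris, and about 20% and 7% for Ali.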

Reply

Michael Bell says:

Further to comments made earlier in this thread, I thought I’d post an update for those of you following who have an interest.

I have now submitted evidence to the Commons Education Select Committee as Dennis advised. I wait to see whether this is accepted.

I have also contacted Ofqual, JCQ, and the exam boards Pearson Edexcel, AQA and OCR to ask them to state their policy for dealing with the issue of talented students at poorly performing schools and await their responses. If I receive anything I will post an update here.

Lastly, and perhaps most importantly, I have set up a campaign on Crowdjustice to challenge Ofqual. The detail can be found here – https://www.crowdjustice.com/case/challenge-ofqual/

I urge those of you who have children in this situation to support the campaign and also pass this link on to your social media network. The more widely this is shared and the louder the voices that are heard challenging the current plans, then the more chance we have of being listened to and hopefully getting changes made that lead to our children being treated fairly and not discriminated against.

Reply

Dennis Sherwood says:

Hi Michael

I’ve just read your Crowdjustice page. Sparklingly clear, powerfully written. I do hope you will receive all the support you are seeking.

Reply

Michael Bell says:

Thank you Dennis, your posts and suggestions have been very useful.

With regards to the campaign, it may well be that no-one really gets the message until the unfortunate students affected receive their results. Let’s hope not, but we’ll see.

Reply

Angel says:

Hello. Is there any chance you could answer this question:

After completing grading and ranking, we have a peak in the 7 to 9 level-range.

We currently have 55% on 7 to 9 vs 37% last year.

Students awarded levels 6 downwards are more in line with last year’s results percentages.

Will the 55% peak in the 7 to 9 levels affect/impact students in the 6 to 1 levels who are more in line with previous year results?

Reply

Dennis Sherwood says:

Hi Angel – apologies for not replying sooner.

As I’ve mentioned before, I have no information other than my understanding of the published documents, so I don’t “know” the answer. Perhaps your board will give you a definitive ruling – ideally before you have to make your submission!

As far as I know, the details of exactly how ‘statistical moderation’ will work have not been published, nor the policy on how much, if any, grade inflation will be tolerated. It is therefore possible that all your centre assessed grades might be confirmed, even though your 7 to 9 numbers are higher than your history.

But if not, it all depends on the rank order, and how much ‘space’ there is within each grade that can ‘absorb’ a down-grade from the next grade up, but without ‘pushing’ any candidate at the bottom of the grade into the next grade down.

As an example, suppose that in the past, you had only 2 candidates, [A] awarded grade 7, and [B] grade 5. This year you also have 2 candidates: [X] is very able, and you submit grade 9; [Y], grade 5. Those are the grades you submit, with rank order [X] above [Y].

The board identifies [X] as an outlier, and downgrades to 7; [Y] is confirmed as 5.

That works because there is ‘space’ for [X] to be downgraded leaving [Y]’s grade alone. But with more candidates, there will be a possible ‘domino’ effect, as everyone is shuffled downwards.

Throughout all this, the rank order is preserved. So it’s quite easy to simulate. If you haven’t already done this, and if there is still time, please contact me for I have some software that can test this out very quickly.
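For readers who want to try the idea for themselves, here is a minimal sketch in Python. It is not the software mentioned above, and it is not the boards' method, which has not been published. It simply assumes that the historical share of each grade is imposed on this year's cohort, working down the submitted rank order; the function name and the rounding rule are assumptions.

```python
def allocate_by_rank(n_candidates, historical_shares):
    """Return the grades for rank positions 1..n (best first) if the
    historical share of each grade is imposed on this year's cohort.

    historical_shares maps grade (integer, higher is better) to its share;
    the shares are assumed to sum to 1.
    """
    grades = sorted(historical_shares, reverse=True)
    awarded = []
    cumulative = 0.0
    for g in grades:
        cumulative += historical_shares[g]
        target = round(cumulative * n_candidates)  # assumed rounding rule
        awarded.extend([g] * (target - len(awarded)))
    # Guard against floating-point shortfall: pad with the lowest grade.
    awarded.extend([grades[-1]] * (n_candidates - len(awarded)))
    return awarded


history = {7: 0.5, 5: 0.5}             # past: one grade 7, one grade 5

print(allocate_by_rank(2, history))    # [7, 5]: [X] 9 -> 7, [Y] stays at 5

submitted = [9, 9, 7, 7, 5, 5]         # a hypothetical larger cohort
model = allocate_by_rank(6, history)   # [7, 7, 7, 5, 5, 5]
changed = [(rank + 1, s, m)
           for rank, (s, m) in enumerate(zip(submitted, model)) if s != m]
print(changed)                         # [(1, 9, 7), (2, 9, 7), (4, 7, 5)]
```

In the larger made-up cohort, the fourth-ranked candidate is pushed from 7 to 5 even though the school's over-submission was at grade 9. That is the ‘domino’ effect.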

Hope that’s helpful.

Reply

Angel says:

Dear Mr Sherwood,

Many thanks indeed for your response to my question.

I would like to contact you to use the software.

I had a meeting with my Head teacher and Deputy Head teacher. They are interested in the formula that you suggested. We are under extreme time constraints because submission is just 2 or 3 days away. Would there be any possibility of doing it today? By all means, I fully respect your time and appreciate you have priorities. With full appreciation for your help,

Angel Mina

Reply

Dennis Sherwood says:

Hi Angel – yes: please contact me on [email protected]

Cheers Dennis

Reply

Maria H says:

Hi Dennis

I have been following this post with interest. Please could you advise your opinion on the following.

1. With A levels if the Prior GCSEs of the subject cohort are taken into account, this could lead to much higher grades than usual if the cohort are stronger than before?

2. If small cohorts are going to have special consideration regarding the teacher assessed grade weighting, what is considered to be small: fewer than 10, or 20?

Thanks,

Reply

Dennis Sherwood says:

Hi Maria – thank you in general, and in particular for asking for “my opinion”, for, as an ‘outsider’ with access only to published information, that is all I can give.

Q1. Yes.

It is certainly my opinion that if a previously strong school just happens to have a weaker cohort this year, this year’s students will benefit from the prowess of their predecessors.

My primary source for this opinion is a statement on page 9 of Ofqual’s ‘Guidance notes’, dated June 2020, so it’s right up-to-date (https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/890811/Summer_2020_grades_for_GCSE_AS_A_level_guidance_for_teachers_students_parents_09062020.pdf):

“However, if grading judgements in a subject in some schools and colleges appear to be more severe or generous than others, exam boards will adjust the grades of some or all of those students upwards or downwards accordingly.”

This is under the heading “How will centre assessment grades be standardised?”, which is a description of how “statistical standardisation” will work.

That statement explicitly mentions “upwards” as well as “downwards”. The BIG QUESTION is “on what basis will these adjustments be made?”, to which the answer has to be “history”, as explicitly stated in this blog published by Ofqual on 15 May (https://ofqual.blog.gov.uk/2020/05/15/making-grades-as-fair-as-they-can-be-advice-for-schools-and-colleges/):

“Within each subject, it will consider each centre individually, using the centre’s historical results and the prior attainment of the current students, to judge whether its centre assessment grades are more generous or severe than predicted. For AS/A levels, the standardisation will consider historical data from 2017, 2018 and 2019. For GCSEs, it will consider data from 2018 and 2019, except where there is only a single year of data from the reformed specifications.”

The “Isaac” story focuses on the bright student chained to a weak past, and the fact that Ofqual have now ruled out any dialogue between an exam board and a centre, so preventing a school from being able to justify an up-side outlier. And given that the board has no way of distinguishing between a truly talented student and a school-that-is-trying-to-take-the-board-for-a-ride by submitting over-the-top grades, the board is likely, I believe, to assume the worst.

If the board is to apply the same rule to every school (as I believe it must, but that might make an ‘interesting’ legal case sometime in the future), then the board must upgrade too, so as to ‘protect students from being graded by ol’ hard-o-nails’. So, yes.

Q2. Don’t know.

But it’s a good question. A couple of thoughts. The only reference in the June document (https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/890811/Summer_2020_grades_for_GCSE_AS_A_level_guidance_for_teachers_students_parents_09062020.pdf) to ‘small cohorts’ is on page 10:

“For small centres (which will include many Pupil Referral Units and Special Schools) and subjects with a small entry the balance will be different than for large centres and subjects. It is important that the standardisation model is sensitive to the reliability in statistical predictions for small centres.”

But that’s terribly vague – it doesn’t answer the question “how small is small?”, nor does it give any insight into what “…the balance will be different…” means for real.

As noted in my reply to E. Richardson, there is a possibility that the submission from a centre for a small local cohort within a large national cohort might get overlooked; but if there are a large number of small cohorts, all of which have ‘optimistic’ submissions, that could threaten grade inflation in that subject, as the example in the table illustrates.

I consider it to be a great pity that Ofqual, and the SQA, have been so secretive about the rules. Presumably they know what the rules are, for they have to use them. So why not make them explicit so that everyone knows what they are and why they are like that? Each centre can then have knowledge, in advance, of whether their submitted grades are likely to be confirmed, or of the risks they are taking by not complying. To me that makes sense…

Reply

MariaH says:

Hi Dennis

Thank you for your answers. I think I asked question number 1 incorrectly.

GCSEs are taken into account across the subject cohort for those taking A levels.

If the GCSEs are stronger than in previous years, this should allow for more top grades at A level.

So if Isaac, for example, achieved good GCSE grades, as did the other members of his class, then might his Physics grade be allowed by the exam board?

Reply

Tania says:

Hi Dennis,

Thanks, interesting and insightful as ever!

Having been through “reviews of marking” and a full appeal on an A level last year (which was successful even though it was Chemistry which should be easy to mark correctly!), I agree with you that teacher assessment, if done equally across all schools, is the fairest way of students getting the right results and should be a more accurate reflection of a student than exams. Obviously it would be better if students knew that was what would happen for them, but we are living in strange times.

I see you noted in your article that boards will not look at a centre overall, but it seems to me that the fairest way to weed out gaming/generous/less generous approaches by schools would be to look at whether the overall submission from the school appeared to be reasonable (within context of previous centre results and cohort tests) before drilling down into the detail by subject, particularly for A levels where many schools will have cohorts of fewer than 10 students so a statistical approach by subject will not work. It is disappointing if they have closed the door on that “reality check” at that level.

I am not so worried about GCSEs, they are not as important, the universities will be able to put them in context and most schools will have enough data for the statistical approach to work for most students, although my son’s strategy to save the real burst of work to the end has not worked out well this year! I feel for parents of anxious Year 13s in the most mixed ability schools.

Reply

Tania says:

Michael Bell,

I applaud your effort to address the situation of high achieving students in low achieving schools, but I think you would be more likely to help your daughter get the right outcome by helping her to contact her university to explain her concerns and situation. I think it is quite possible that they would make her offer unconditional or advise her how to approach the situation on results day. As Dennis said above, setting a stake in the ground at this point is helpful and may mean that when the Admissions Tutor is going through offers and results in the week between universities getting results and students getting them, they will be fully informed.

Obviously that is not an ideal situation and, if the system does not give your daughter the results she deserves, even if the university takes a pragmatic approach she will feel rightly aggrieved that her A level results do not reflect her abilities, but her degree will be more important in the end.

Good luck!

Reply

Michael Bell says:

Hi Tania

Thanks for your comments. We have indeed contacted my daughter’s university regarding this issue and whilst they will not give an unconditional offer they have said they will take these particular circumstances (and the way this statistical standardisation process appears to be going to work) into account and reading between the lines I hope that she should be OK regardless of the grades she’s eventually awarded. Although there are no guarantees and as you’ll have seen earlier in this thread, at least one university seems to be unwilling to take this matter (talented outlier student at poor/mediocre school) into account and make any allowances for grades being potentially adjusted downwards.

Nevertheless, the complete lack of clarity on how this model will actually work and the outright evasive way in which Ofqual seem to be approaching this (to the point where schools know no more about its operation than we do as parents or students even though they have to submit their marks by today) is to me fundamentally wrong and still needs to be challenged, particularly as there is to be no right of appeal regarding either the operation or the outcomes from the statistical standardisation process at all, either for student or exam centre.

Reply

Dennis Sherwood says:

Hi again Maria

Thanks for the clarification.

Mmm. I really don’t know. Given that the details of the rules have not been published, it’s possible that this could happen. But I’d be surprised.

Firstly, in the latest version of Ofqual’s ‘Guidance for teachers’, published earlier today, page 10 states “the trajectory of schools and college results will not be taken into account ” (https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/890811/Summer_2020_grades_for_GCSE_AS_A_level_guidance_for_teachers_students_parents_09062020.pdf).

I interpret that as meaning, for any subject at any level, any historic trends are disregarded.

I haven’t studied the statistics, so I don’t know if, say, the 2019 results in A level English correlated better with the A level results of 2018, 2017 and 2016, than with the GCSE results of 2017, this being the cohort, some of whom are taking A level English in 2019 – or indeed the subset of the GCSE candidates of 2017 that actually are sitting A level in 2019.

If the GCSE – A level correlation is known to be better (and presumably the boards and Ofqual know this), then, for 2020, why bother with the A level results of 2019, 2018 and 2017? And if the prior A level correlation is known to be better, why bother with GCSE?

And if they are using both, in some form of algorithm, why not tell us? What’s to hide?

One thing that I don’t think can happen this year, as I’ve mentioned elsewhere, is something like “candidate [x] got [this grade] at GCSE, and our models tell us that the likelihood that the same person will get [that grade] at A level is low – so we’ll overrule [that grade] and award [another grade altogether]”.

They can’t do that in general, for it will change the rank order, which they have committed to keep intact. So if they can’t do that in general, I would be surprised if they were to do that in particular cases.

Lastly, comparisons between GCSEs and A levels might be ‘interesting’ but I suspect are fraught with difficulty if used ‘for real’. The cohorts are different; the teachers are different; the students are two years older – so many things will have changed. And add to all that the intrinsic unreliability of both the GCSE and A level grades in making those comparisons (https://www.hepi.ac.uk/2019/02/25/1-school-exam-grade-in-4-is-wrong-thats-the-good-news/)

But I am confused by the statement, on page 9 of the ‘Guidance for teachers’, that says “the standardisation model will draw on the following sources of evidence: historical outcomes for each centre; the prior attainment (Key Stage 2 or GCSE) of this year’s students and those in previous years within each centre”.

What does “draw on” mean? And what is the relevance of Key Stage 2 to A level? I really don’t know.

So, overall, my hunch is that they will keep things simple, and as robust as possible, comparing this year’s cohort to the three-year relevant average for A level, and to whatever other average works for GCSE graded 9, 8, 7….

But I might be wrong.

Which is, I think, the real problem. I might be wrong because I don’t know. And I don’t know because the rules have not been made clear. Why not?

Reply

Tania says:

I think I read that the universities were told not to make any more unconditional offers. I know it is tough, but it sounds like it really should be OK for your daughter, but some will lose out.

My understanding of what Dennis is saying is that the whole exam system is much more flawed than people realise. I should not have had to get 6 papers reviewed and then fund an appeal to get the right grade for my son (total cost was around £500!). As the article says, so many people just live with the wrong grades in a normal year because it is difficult and expensive to challenge them. The exam boards are businesses but are responsible for policing themselves to a large extent.

I don’t think Ofqual are being deliberately evasive, it is a difficult process to develop and they are carrying out consultations as they go along, although it is not clear that they take any notice of the responses. The civil service/quangos are not known for their speed of response!

Reply

Dennis Sherwood says:

Hi Tania

Is Ofqual deliberately evasive? Well…

On 11th August 2019, Ofqual posted an announcement on their website which included this statement: “…more than one grade could well be a legitimate reflection of a student’s performance…” (https://www.gov.uk/government/news/response-to-sunday-times-story-about-a-level-grades).

To my knowledge, that is the first time Ofqual have explicitly admitted that grades are unreliable.

I then wrote this blog (thank you, HEPI) https://www.hepi.ac.uk/2019/08/15/dear-ofqual-%EF%BB%BF/. The questions have remained unanswered to this day.

Another “interesting” document is this one: https://research.aqa.org.uk/research-library/review-literature-marking-reliability (I think there’s something wrong with the link on that page, but contact me by email and I can send you a pdf).

Page 68 says

“The literature reviewed has made clear the inherent unreliability associated with assessment in general, and associated with marking in particular.”

And page 70

“However, to not routinely report the levels of unreliability associated with examinations leaves awarding bodies open to suspicion and criticism. For example, Satterly (1994) suggests that the dependability of scores and grades in many external forms of assessment will continue to be unknown to users and candidates because reporting low reliabilities and large margins of error attached to marks or grades would be a source of embarrassment to awarding bodies. Indeed it is unlikely that an awarding body would unilaterally begin reporting reliability estimates or that any individual awarding body would be willing to accept the burden of educating test users in the meanings of those reliability estimates.”

Indeed so. No one likes to wash their dirty linen in public. So maybe “reporting reliability estimates” is something a regulator might do. Now there’s an idea.

This document is doubly “interesting”.

Firstly, it was written in 2005. That’s a long time ago, and nothing has happened in the 15 years since then to fix the problem.

Secondly, the lead author is Dr Michelle Meadows, who in 2005 worked at AQA. Today, Dr Meadows is the Director For Strategy, Risk and Research at Ofqual.

And a week ago, on 5th June, Dr Meadows appeared before the Education Select Committee giving evidence in connection with “cancelled exams and the calculated grades system” (https://www.parliament.uk/business/committees/committees-a-z/commons-select/education-committee/news-parliament-2017/impact-of-covid-19-school-closures-evidence-19-21/).

I wonder what she said.

Those are just two examples. I have many more…

Reply

William says:

I am a year 13 student who attends a non-selective state school. As a potential “Isaac”, I am very concerned that my grades could be downwardly adjusted so that they are in-line with the achievement of previous cohorts at my school. Like other students mentioned in the accounts above, I have achieved very high attainment in my mocks, I also have straight As (the top grade) at AS level and straight 9s at GCSE in my A level subjects.

As others have said, the standardisation process which will be used by Ofqual will fail to account for students who are outliers with respect to current and previous cohorts at their school. This means that a student of identical academic ability to me, but who attends a selective grammar school, will likely end up with higher grades. I don’t know any other way to put it: it is exceptionally unjust and unfair for this group of students, it is a perfect recipe for widening the attainment gap and it is wrong.

I don’t understand why Ofqual is not willing to use the readily available and externally moderated information (i.e. GCSEs and AS levels (where available)) to triangulate the teacher assessed grades, instead of simply assuming that they are “over-inflated”. If my teacher assessed grades are in-line with the expected progression given my prior attainment, why should my grades be downwardly adjusted? This is worsened further by the fact my school will not be able to appeal to Ofqual on my behalf- for instance- by presenting mock exam results, evidence of consistent high attainment in class tests or my prior attainment data.

I understand that Ofqual is suggesting that if I am in this position, I am to take exams in Autumn. However, in Ofqual’s own words “the great majority of [students] will probably not take exams in the Autumn series”. Not only this, but the cohort who takes part in the Autumn exam series will certainly not be representative of an average cohort. I fear that the average academic ability of the students who take part in this exam series will be higher than would be expected in a normal exam season. Thus, it could be relatively harder to achieve top grades in this exam series than in previous years: a double injustice! It is also unclear whether all universities will hold offers open for those who choose to take the exams.

Dennis, in one of your above comments you suggest that universities should be flexible. However, my fear is that the confidentiality clauses around the teacher assessed grades will mean that I won’t be able to effectively appeal to my universities since I will not be able to establish that I have been unfairly penalised by Ofqual’s standardisation model.

I want to know what, if anything, Ofqual has done to inform universities that high achieving students in non-selective state schools are likely to be disproportionately and unfairly affected by the standardisation model they have designed. The message being falsely portrayed is that Ofqual’s system is “fair” and “supporting student progression”; this is clearly not the case for this group of students.

I am in strong support of Michael Bell’s proposal, since I believe that the standardisation model Ofqual has designed is neither reasonable nor fit for its stated purpose!

Dennis Sherwood says:

Hi William

Thank you for taking the time and trouble to post your important comment.

And I do hope, most sincerely, that you are not about to become a victim of this year’s process.

But I don’t know, for Ofqual have failed to publish the details of the ‘statistical standardisation’ process. So it may be that your fears – and mine, and many other people’s too – are unfounded, and that a ‘triangulation’ process of the type you suggest will indeed be carried out. But it may not.

Ofqual, and the SQA too, have had every opportunity to make the details clear so that schools would be able to take them into consideration when determining their centre assessment grades. That did not happen, and now the deadline for submission has passed.

Even so, Ofqual could still clarify matters so as to reassure “Isaacs” that they will not be disadvantaged, which would be a most welcome relief. But the longer Ofqual remain silent, the more likely it is that I (and perhaps others) will start thinking, “Ofqual have still not disclosed the details, and so, presumably, have done this deliberately. Is that because they do not wish to give the explicit bad news “We’re sorry, Isaac, but…”, preferring to leave everything until everyone’s results are declared in August?”. Ofqual might publish the answer, whatever it is, next week. But they might not.

The Parliamentary Select Committee on Education is currently conducting an inquiry into “The impact of Covid-19 on education and children’s services”, encompassing, amongst other things, “The effect of cancelling formal exams, including the fairness of qualifications awarded and pupils’ progression to the next stage of education or employment”. It is still open for evidence, which can be submitted on-line at

https://committees.parliament.uk/work/202/the-impact-of-covid19-on-education-and-childrens-services/

Thank you once again. And I wish you every success – which you will surely achieve despite the current anxiety.

Dennis

Michael Bell says:

Hi William

I am very sorry to hear that you are in the same position as my daughter.

Just with regard to accessing your teacher assessed grades in the unfortunate event our fears are borne out and you do have your grades adjusted down by the exam board, I believe you have a legal right to make a “subject access request” after the results have been released, which requires your school to give you that information within one month (I think). However, if it is the case that the school themselves have already adjusted your grades downwards before submitting them (as I understand some schools have been effectively second guessing the workings of the model and adjusting their grades before submission to ensure they are in line with their previous historic performance) then I am unsure whether you’d be given this information. You would hope that your school would be supportive of your position though and provide you with what you need.

As for your question as to whether Ofqual has done anything to inform universities that high achieving students in non-selective state schools are likely to be disproportionately and unfairly affected by the standardisation model they have designed, I don’t know for sure but I would think that’s highly unlikely, given that they haven’t admitted that to anyone that’s asked them so far, myself included. That is why I do consider them to have been evasive, as they’ve had every opportunity to address this issue and have singularly failed to do so.

I’d encourage you to submit evidence to the Commons Education Committee as Dennis has done, and to share as widely as you can the details of this issue so that more become aware of it and support a campaign to challenge the current position.

Michael

Dennis Sherwood says:

Some ‘stop press’ news this morning, 15th June.

Over the last few weeks, FFT Education Datalab have been ‘looking over’ the GCSE grades likely to be submitted by 1900 schools. They’ve just posted this blog:

https://ffteducationdatalab.org.uk/2020/06/gcse-results-2020-a-look-at-the-grades-proposed-by-schools/.

As you’ll see, it looks like every GCSE subject has been over-bid, and it could well be the same for A level too.

If that is the case, then it is, I think, quite likely that the boards will intervene quite heavily…

Huy Duong says:

I have contacted Ofqual multiple times on their blog and by email to ask them about the issue of variation for small cohorts. One of their answers simply restates what we already know:

“The statistical standardisation model will consider each centre individually, using the centre’s historical results and the prior attainment of the current students, to judge whether its centre assessment grades are more generous or severe than predicted.

For AS/A levels, the standardisation model will consider historical data from 2017, 2018 and 2019. For GCSEs, it will consider data from 2018 and 2019, except where there is only a single year of data from the reformed specifications. The model will accommodate those centres for whom there is not this many years of data available. We are aware that year-on-year variation in student results is greater in small centres (including alternative provision). Any model we use will take this greater variation into account.”

So I restated what I had asked for:

“Yes, I understand that the statistical model will consider each subject for each centre and 2017, 2018 and 2019 data will be used for A levels (while 2018 and 2019 data will be used for GCSEs). I also understand that Ofqual plans for the model to allow for greater relative variations for smaller cohorts (not just smaller centres, but smaller cohorts because even a large centre might have small cohorts entered for less popular A levels).

The information I’m seeking is the details of any statistical model which can reliably predict the grade distributions for cohorts of 10, 20, 30, 40 students. From my experience of developing software to manage clinical trials, and discussion with colleagues and family members who are professional statisticians, it seems to me that statistics cannot model cohorts such as my son’s Further Maths A level class (7 students) reliably with worthwhile tolerances, so what I’m seeking is the details of how Ofqual plans to do it.”

And their reply on 27th May was

“We are still refining the detail of the statistical model and will publish more detail in due course.”

If they really think there is a statistical model with the magical power to moderate small cohorts with worthwhile tolerances, then we are led by fools and a lot of young people will suffer injustice. What I’m hoping for is they realise the fallacy and their “statistical model” will give small cohorts such wide tolerances that the effects of moderation will be small, and they just didn’t want to say it before the grade submission to prevent schools gaming the system. On the other hand, having seen the mistakes the government has been making in dealing with the crisis that brought this about, and its inability to see those mistakes, I’m not so sure.
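To put rough numbers on the small-cohort worry, here is a minimal sketch. It assumes each student independently has the same chance of a top grade (a plain binomial model, chosen purely for illustration and in no way Ofqual’s undisclosed method); the 30% figure and the function name are hypothetical.

```python
import math

def spread_of_top_grade_share(cohort_size, p_top):
    """Standard deviation of the share of top grades in a single cohort,
    assuming each student independently gets a top grade with
    probability p_top (an illustrative binomial assumption)."""
    return math.sqrt(p_top * (1 - p_top) / cohort_size)

# Hypothetical long-run top-grade rate of 30% for a subject at one school.
P_TOP = 0.30

for n in (7, 10, 20, 40, 200):
    sd = spread_of_top_grade_share(n, P_TOP)
    print(f"cohort of {n:>3}: typical year-on-year swing of about +/-{sd:.0%}")
```

Under these assumptions the spread shrinks only with the square root of the cohort size, so a class of 7 has a natural swing more than twice that of a class of 40, which is why a single historical average says little about any one small class.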

Michael Bell says:

Huy Duong,

I’ve had the same responses from Ofqual, including “We are still refining the detail of the statistical model and will publish more detail in due course.”

So I asked them to tell me when they WOULD be publishing the details of the models (and so would be able to answer my specific questions). You won’t be surprised to find they haven’t replied, even when chased.

Dennis Sherwood says:

Ofqual are not alone in not answering questions. Here are two as-yet-unanswered questions posed to OCR on 22 May: https://schoolsweek.co.uk/why-we-can-be-confident-about-this-summers-grades/

Huy Duong says:

Hi Dennis,

Regarding your second question at the link above, namely,

“2. If not, why are schools being asked to submit grades at all? As your piece makes clear, the board needs only the rank order, on which they can superpose whatever grade boundaries they wish. Is not asking schools to submit grades that might be over-ruled for reasons that the school can neither anticipate nor control just setting them up to fail?”

I think it makes sense for the schools to submit both the ranking and the predicted grades. With just the grades (i.e., without the ranking), if it is necessary for the boards to adjust them, they won’t have any information on which students are to be adjusted. With just the ranking (i.e., without the predicted grades), the role of the school’s past performance will be too dominant. With both the ranking and the predicted grades, there is more potential for accommodating both the external standardisation and what the teachers think the students should get.
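The ‘ranking only’ case can be sketched in a few lines. This is a hypothetical illustration, not the boards’ actual procedure (which has not been published): the function name, the students and the quota are all invented, and the quota stands in for a grade distribution derived from a school’s past results.

```python
def grades_from_rank(ranked_students, quota):
    """Assign grades using only the rank order.

    ranked_students: names, best first.
    quota: list of (grade, number_of_students), best grade first,
           e.g. taken from the school's historical distribution.
    """
    if sum(count for _, count in quota) != len(ranked_students):
        raise ValueError("quota must cover every ranked student exactly once")
    result = {}
    remaining = iter(ranked_students)
    for grade, count in quota:
        for _ in range(count):
            result[next(remaining)] = grade
    return result

# Hypothetical class of four, with a history suggesting one A, two Bs, one C.
ranking = ["Ann", "Ben", "Cat", "Dev"]
historical_quota = [("A", 1), ("B", 2), ("C", 1)]
teacher_grades = {"Ann": "A", "Ben": "A", "Cat": "B", "Dev": "C"}

imposed = grades_from_rank(ranking, historical_quota)
overruled = [s for s in ranking if imposed[s] != teacher_grades[s]]
print(imposed)
print("overruled:", overruled)
```

In this sketch the teacher’s grade for Ben plays no part at all: past performance alone decides that only one A is available. Submitting the grades as well at least gives the board something to weigh against the quota.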

Dennis Sherwood says:

Hi Huy

Thank you, and I accept both your points. And I do hope that your second point, about submitting just the rank order without the grades, is both right and what will actually happen.

My anxiety, though, concerns those words “the role of the school’s past performance will be too dominant”, for I just don’t know how “dominant”, or not, past performance will be. OCR had the chance to clarify that by posting a reply to the Schools Week article, but they chose not to. Maybe they never read the questions…

Perhaps, one day, Ofqual and the SQA might be kind enough to tell us all what the rules actually are…

Dennis Sherwood says:

Thanks to everyone who has contributed to this most stimulating conversation!

This blog was posted on 18 May; I write this note on 19 June, one week after the final date, 12 June, by which schools had to submit their centre assessment grades. If you haven’t already seen my latest blog, posted yesterday, this is the link: https://www.hepi.ac.uk/2020/06/18/have-teachers-been-set-up-to-fail/

In the new blog, I refer to some data published by FFT Education Datalab, which is a sort-of ‘exit poll’ of submitted grades (or rather ‘pre-entrance poll’): their results suggest a very high likelihood that all GCSE grades have been over-bid (they don’t have any information on A level) – see https://ffteducationdatalab.org.uk/2020/06/gcse-results-2020-a-look-at-the-grades-proposed-by-schools/

So as well as the built-in ‘Isaac’ unfairness, it looks as if a large number of schools have missed the point of the table, and all bid ‘11’ rather than the average ‘10’.

This is a great pity. The more so since each individual school is quite likely to have acted in its own interests, and not been unduly ‘greedy’, but to the detriment of the whole community: a true ‘tragedy of the commons’ (https://en.wikipedia.org/wiki/Tragedy_of_the_commons).

It is, however, possible for ‘tragedies of the commons’ to be avoided, but to do this requires both decisive and wise leadership, as well as willing and trusting followership. I wonder what that might imply as regards the role of bodies such as the teaching associations ASCL, NAHT, HMC and the rest, and the extent to which their respective communities are willing to listen to wise advice?

Michael Bell says:

An update on the “We are still refining the detail of the statistical model and will publish more detail in due course” statement from Ofqual.