How to be ‘innovative’ in school exam assessment – fewer grades

This blog by Dennis Sherwood considers the latest twists in school and college pupils’ assessments for 2021.

Ofqual’s newly-appointed Chief Regulator, Simon Lebus, recently stated his commitment to ‘supporting “innovation” in assessment’. Now that school exams have been cancelled in England, there is an opportunity for him to do just that.

The details for this year’s process remain to be confirmed, but as signalled in the exchange of letters between Gavin Williamson and Mr Lebus on 13 January 2021, and as confirmed in Ofqual’s consultation document of 15 January, a central proposal is that ‘a student’s grade in a subject will be based on their teacher’s assessment of the standard at which they are performing’. In which case, an interesting innovation would be for these teacher assessments to be structured not as 7 A level grades, 6 AS grades and 10 GCSE grades, but as four bands. In higher education, of course, this has been the case for decades. For schools, though, this idea could well be dismissed as stupid, impractical, revolutionary. But is it sensible?

The key question is this: can a teacher of, say, A level Geography distinguish between students reliably enough to assess one candidate as grade C, and another as grade B? Or for GCSE English, grade 6 and grade 5? Yes, most teachers can probably identify the true high-flyers who merit a high A* or 9, and those who unfortunately are at the opposite end of the ability spectrum. But in the middle, it is far, far harder to make these distinctions.

Perhaps the exam system can do better, for, given the much-repeated statement that ‘exams are the fairest way of assessing what a student knows, understands and can do’, then surely exams must be the gold standard, delivering finely-divided grades that are fully reliable and trustworthy.

But are they?

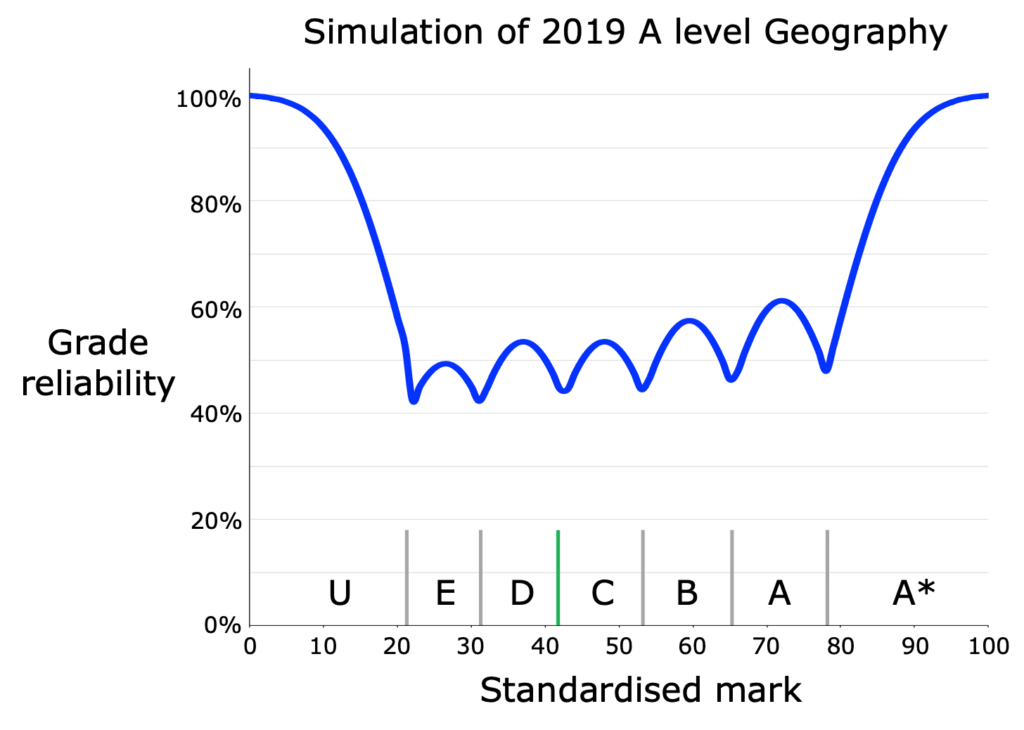

No, they are not. At the Select Committee hearing of 2 September 2020, Ofqual’s then Chief Regulator stated that exam grades are ‘reliable to one grade either way’. That’s rather vague, so to make those words real, this chart shows the results, based on Ofqual’s own research, of the author’s simulation (details of which are available on request) of the grades awarded to the 31,768 candidates who sat A level Geography in England in 2019.

The horizontal axis shows the mark given to any script, on a standardised scale from 0 to 100, and for each mark, the blue line answers the question ‘what is the probability that a script given that mark would receive the same grade, had that script been marked by another examiner?’

This question recognises that the examiner who marks any script (or the composition of the team who collectively mark a single script) is in essence determined by a lottery, and that any script might be marked by another examiner (or team). And since ‘it is possible for two examiners to give different but appropriate marks to the same answer’, it is possible that the resulting grade will be different too. The blue line is therefore a good measure of the reliability of the awarded grade.
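To make the idea behind the blue line concrete, here is a minimal sketch of how such a probability could be calculated. It is not the author's simulation, whose details are not given here. It assumes that a second examiner's mark is normally distributed around the mark actually given, and both the grade boundaries (`BOUNDARIES`) and the spread of marks (`MARKING_SD`) are made-up illustrative values, not real A level Geography figures.

```python
import math

# Hypothetical grade boundaries (lowest mark for each grade) on a 0-100 scale.
# Illustrative only; these are NOT real A level Geography boundaries.
BOUNDARIES = [("U", 0), ("E", 30), ("D", 40), ("C", 50),
              ("B", 60), ("A", 70), ("A*", 85)]

# Assumed standard deviation of a second examiner's mark around the given mark.
MARKING_SD = 6.0


def normal_cdf(x, mean, sd):
    """Cumulative distribution function of a normal distribution."""
    return 0.5 * (1.0 + math.erf((x - mean) / (sd * math.sqrt(2.0))))


def grade_band(mark):
    """Return (grade, lower, upper) for the grade band containing `mark`."""
    for i, (grade, lower) in enumerate(BOUNDARIES):
        upper = BOUNDARIES[i + 1][1] if i + 1 < len(BOUNDARIES) else math.inf
        if lower <= mark < upper:
            return grade, lower, upper
    raise ValueError("mark must be non-negative")


def same_grade_probability(mark, sd=MARKING_SD):
    """Probability that a second examiner's mark falls in the same grade band."""
    _, lower, upper = grade_band(mark)
    # The bottom band has no lower limit and the top band has no upper limit.
    p_below_upper = 1.0 if math.isinf(upper) else normal_cdf(upper, mark, sd)
    p_below_lower = 0.0 if lower == 0 else normal_cdf(lower, mark, sd)
    return p_below_upper - p_below_lower


if __name__ == "__main__":
    for mark in (5, 60, 65, 69, 100):
        grade = grade_band(mark)[0]
        print(f"mark {mark:3d} (grade {grade}): "
              f"{same_grade_probability(mark):.0%} chance of the same grade")
```

Under these assumptions, a mark in the middle of a band is more likely to keep its grade than a mark next to a boundary, and marks far from any boundary are close to certain. Different assumed boundaries or a different `MARKING_SD` would change the numbers, so the sketch shows the shape of the calculation rather than Ofqual's or the author's results.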

The results might be surprising. As can be seen, very high A*s and poor Us are 100% reliable, implying that those grades would be awarded no matter who marked the script. But look at grades A, B, C, D and E, for which the grade reliability bounces around between about 60% and 40%. That means that, in 2019, the nearly 30,000 students ‘awarded’ grades A to E in A level Geography (that’s more than 90% of the total cohort) each had about a 50% chance of being awarded a different grade had their scripts been marked by another examiner. What does that say about the reliability and trustworthiness of the grade on the certificate?

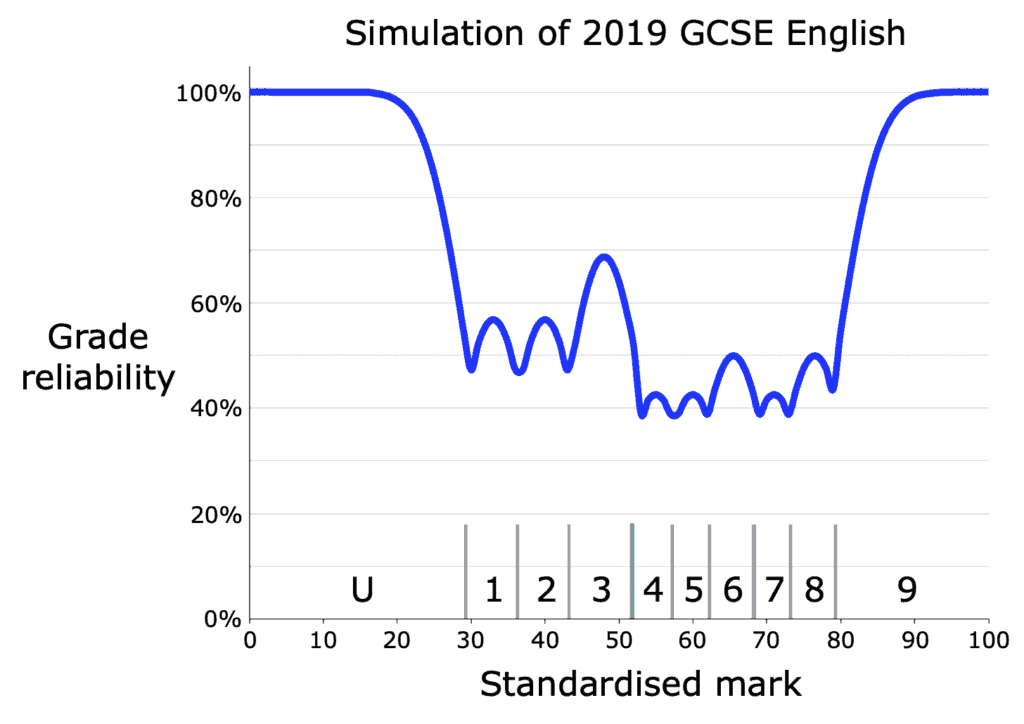

For GCSEs, because there are a greater number of necessarily narrower grades, matters are even worse, as exemplified by this chart showing the results of a simulation of the grades ‘awarded’ to the 707,059 students in England who sat 2019 GCSE English.

As can be seen, grades 4, 5, 6, 7 and 8 are less than 50% reliable, with grades 1, 2 and 3 being somewhat better – but even the 70% reliability reached by scripts marked at the middle of grade 3 is hardly an accolade.

Yes, grades for Maths and Physics are in general more reliable than those for Geography and English, but even these dip towards 50% at the grade boundaries. The overall message, however, is clear: the ‘gold standard’ of reliability isn’t quite as golden as one might have thought, let alone wished. And if the exam system can’t reliably distinguish between a grade C and a grade B, or a grade 5 and a grade 6, why should teachers be asked to make such spurious – and correspondingly unjustifiable – distinctions?

I don’t think they should. To do so implies a wisdom that I don’t think any human being can demonstrate. But the pressures to do so are strong – not just the comforting allure of the familiar but the pragmatic fact that UCAS ‘predictions’, and the corresponding admissions offers for the next academic year, are expressed in terms of conventional A level grades. Those grades, though, are only ‘reliable to one grade either way’, implying that a certificate showing AAB really means ‘any grades from A*A*A to BBC, but no one knows which’. What does that say about an offer of AAB, and the fairness of adhering to it?
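The range ‘from A*A*A to BBC’ can be checked with a short enumeration. This is only an illustration of the ‘one grade either way’ statement taken literally: the helper names `neighbours` and `possible_results` are invented for this sketch, and it says nothing about how likely each combination is.

```python
from itertools import product

# A level grades from best to worst.
GRADES = ["A*", "A", "B", "C", "D", "E", "U"]


def neighbours(grade):
    """Grades within one grade either way of `grade`, best first."""
    i = GRADES.index(grade)
    return GRADES[max(i - 1, 0): i + 2]


def possible_results(certificate):
    """Every combination consistent with 'reliable to one grade either way'."""
    return list(product(*(neighbours(g) for g in certificate)))


results = possible_results(["A", "A", "B"])
print(len(results), "combinations")
print("best: ", "".join(results[0]))
print("worst:", "".join(results[-1]))
```

Each of the three subjects could be one of three grades, so a certificate showing AAB is consistent with 27 subject-by-subject combinations.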

For many years, we have all believed that there is a real difference between a grade B and a grade C, between a grade 5 and a grade 6. We now know this to be a myth. So despite the fact that this idea does not appear in Ofqual’s consultation, surely now is an ideal opportunity to bust the myth and to ‘support “innovation” in assessment’: by asking teachers to submit grades in, say, four bands – being especially careful about candidates close to the grade boundaries – and by inviting universities to solve the problem of how to reconcile their offers to this more realistic, and honest, assessment structure.

Comments

Aasia Shafiq Chaudhry says:

This is a good read because it wants to see the education/exam system(s) become fairer and to safeguard students, particularly those who are vulnerable.

In addition, there is also a need for overall improvement in the education system to improve the quality of education, especially in those schools and colleges where fewer resources are being utilised, so that socio-economically disadvantaged students can get the benefit of what are called high value courses in universities.

Huy Duong says:

When schools were closed in March 2020, the 2020 GCSE and A-level cohorts had already covered most, or at least the majority, of the learning part of their courses. By June 2021, the 2021 GCSE and A-level cohorts will have suffered far more disruption to the learning part of their courses. As we all know, the effect of this disruption varies from student to student. It is difficult for teachers to grade their students in the best of circumstances, and it is even more difficult when they have to filter out the different effects on different students. It is not realistic to expect teachers to grade students to a 10-grade accuracy for GCSE (including U).

What is the point of a 10-grade scale when Ofqual has admitted that “The usual assurances of comparability between years, between individual students, between schools and colleges and between exam boards will not be possible”?

BTW, in 2020, Ofqual should have admitted that about the 2020 grades, with or without their flawed algorithm.

John Lawrence says:

Very interesting and I agree entirely that the whole grading system needs a proper review and not just the tinkering of the past. I do wonder what Ofqual statisticians have to say about the statistical and practical issues around grade boundaries? These are of course issues that are just as relevant when exams are taken, as the marking inaccuracies leave similar questions about levels of statistical confidence, which are especially relevant with narrow ranges of marks between grades.

I would also point out that this whole process is not about helping universities to make entry decisions to courses. Universities must be regarded as only one of many equally relevant destination opportunities for young people at the age of 18 and one would hope that the current pandemic has opened our eyes to the likelihood that universities may not be seen as the obvious destination in future and that there should be more relevant and wider opportunities for learning and development in the future.

That changed approach should lead to the insistence that both syllabuses and indeed exams focus much more on the needs of the customer (the student) and answer with clarity how and indeed whether these qualifications are fit for purpose in preparing young people for a life of work in a rapidly changing world that increasingly allows ready access to information but will require real skills in interpretation, prediction, analysis, innovation, critical thinking and teamwork.

Now that mixture does seem to me to raise some wider and very serious questions about the whole industry of teaching and learning and its willingness and ability to change. It will require major re-skilling in most areas.

Unfortunately, too many educational organisations are rooted in the past, are very slow to change and too often demonstrate that narrow set interest is too high on the agenda.

Of course we also need politicians with some vision, ability and clout to deliver long term strategy rather than short term fixes.

Catherine Brioche says:

Something not mentioned – but something that I think is important – is the appeals process. It seems to me that asking teachers to submit conventional grades will lead to many appeals – why a B rather than an A? Given the evidence presented here, many grading decisions will be hard to justify, and if last year is anything to go by, this will result in a highly defensive appeals process: relevant information will not be disclosed, appeals will be disallowed on totally bureaucratic grounds, and much-vaunted “fairness” will be out of the window. Having fewer grades offers the benefit of fewer appeals and fewer disputes, especially if schools take great care to judge border-line students wisely.

My reference to last year is based on my own – and my son’s – bitter experience. My son’s school ‘played the game’ and submitted grades in line with their history, so my son’s CAG was an A, even though the school really believed he merited an A* – just like ‘poor Isaac’ (https://www.hepi.ac.uk/2020/05/18/two-and-a-half-cheers-for-ofquals-standardisation-model-just-so-long-as-schools-comply/). Many of last summer’s students will have been victims of that, but what is particularly upsetting is that my son’s cohort was just 5 students, so – as we now know – the ‘history’ rule did not apply. But the ‘small cohort’ policy did not emerge until long after the school had submitted its CAGs – had they known that small cohorts were a special case at that time, they would have submitted an A*. The guidelines schools were given were vague and incomplete, and were changed after-the-event.

The school has been very open and honest about this, supporting my appeal by admitting that they acted only to comply with their understanding of the guidelines, that in the absence of the historical constraint the CAG would have been an A*, and that the school had therefore made a ‘centre error’.

In their letter rejecting the appeal, the exam board wrote:

“A concern raised in the individual case was that the school was wrong to internally standardise grades by comparing them with what students had achieved in previous years and this could be grounds for appeal as a ‘centre error’… An additional concern raised as part of the appeal was that where there were small numbers of students (5 or fewer) there should be no internal standardisation of CAGs. This is incorrect. Although the family in this case is disappointed with the outcome of the appeals, we followed official guidance in the initial and independent reviews we have undertaken.”

No. The appeal did not claim that it was “wrong to internally standardise grades” and that “there should be no internal standardisation of CAGs for small cohorts”, for we and the school recognise that “internal standardisation” covers two concepts: honouring high standards (which is good) and constraining grades to history (which was in many cases unfair). The claim was that the rule for “internally standardising” grades for small cohorts was made clear only far too late, with the school feeling it was under pressure, with “internal standardisation to constrain” trumping “internal standardisation to maintain high standards”.

But the appeal has been rejected, with leaden bureaucracy crushing natural justice.

I fear there will be many further instances of this in summer 2021, especially if schools are forced to submit grades which – as this blog shows so vividly – are so unreliable and unjustifiable.

Huy Duong says:

Hi Catherine,

Did your son’s teacher give your son A* in the first instance, then the school downgrade it to A before submission? Or did the school’s process never include the A* from the teacher at any point, and only ever included the A from historical data?

Mark says:

Huy, Interestingly at the top of the JCQ results for Summer 2020 is a tiny caveat that reads “Summer 2020 results were issued under a different set of circumstances due to the COVID-19 pandemic and care must be taken when comparing to previous years”!

Huy Duong says:

Hi Mark,

Thank you, that’s really interesting. Yes, that caveat is in the right direction. Neither the 2020 results (either with or without the silly algorithm) nor the 2021 results are/will be comparable to the previous years’ results. This is true regardless of the accuracy or otherwise of previous years’ results.

Therefore it is strange that Ofqual follows the route that “the [2021] grades will be indistinguishable from grades issued by exam boards in other years”. In any other area of life, passing two different things that are not comparable, eg, a 2019 grade B and a 2021 grade B, as indistinguishable might be viewed as fraud. At least, it is obfuscation. How will future employers know that the two grade Bs are not comparable unless the years are stated on the CVs or unless they ask the candidates?

One of the problems with Ofqual’s consultation this year is that it doesn’t ask for people’s views on some important questions. This is the same problem as with its 2020 consultation, and that problem further blinkered Ofqual and the DfE on their way to last year’s disaster.

Catherine Brioche says:

Hi Huy

My son’s story was covered by Liz Lightfoot in the Guardian on Saturday 16th Jan. The original TAG was A* and his CAG was A. The school instigated its own CAG appeals (for small cohorts only) in which it admitted downgrading and fitting grades to historical data and prior performance data for its small cohorts. The Computer Science cohort was unusually small in 2020 and consisted of just 5 students, two of whom were expected to achieve A*. It had also switched exam boards to OCR, of which his teacher was a senior examiner and thus well placed to judge his students’ ability. My son achieved an A* in his coursework (worth 20%) and every other grade had been A or A*. The school examiner also advised me that all their appeals for small cohorts had been rejected by all exam boards, except Edexcel, including those instigated by other local grammar schools. Also worth noting: my son’s school was so efficient at predicting the outcome of the Ofqual algorithm that his and his friends’ CAGs were an exact match. Hence the U-turn left their grades unchanged.

Huy Duong says:

Hi Catherine,

I have just realised that your son’s story is told in this Guardian article: https://www.theguardian.com/education/2021/jan/16/cruelly-cast-aside-a-level-victims-say-summer-debacle-must-never-happen-again.

I asked my previous question because it is possible that the grading process for your son might have violated GDPR, which would be illegal, and it might have violated the rule about grade moderation too: if there were never any teacher’s grade in the first place, it can’t be said that the school followed the correct procedure when it moderated the teacher’s grade, because you cannot moderate something that does not exist.

Huy Duong says:

Hi Catherine,

I’m sorry, I didn’t see your comments before I posted mine.

It is a very unfair situation. Ofqual couldn’t be bothered to undo the injustice that it has caused, and it seems that the exam boards won’t lift a finger unless Ofqual tells them to.

Huy Duong says:

… I have just had some thoughts about your son’s case.

Before last year’s U-turn, the rule for appeal was that if unrepresentative data has been used to moderate a grade then that constitutes wrong data used for grading. In your son’s case, clearly unrepresentative data was used to moderate his grade.

(BTW, I think for the school to moderate your son’s grade that way is to go against what it is supposed to teach GCSE and A-level maths and science students about statistics; it is a failure of critical thinking.)

Presumably if wrong data is used for grading and if the teacher thinks that the moderated grade is wrong, then it should be fixed, especially as Ofqual has said that no one is in a better position to judge the student’s grade than the teacher.

If the principle “unrepresentative data has been used to moderate a grade then that constitutes wrong data used for grading” is a just principle, then it is just whether it is Ofqual that did the moderation or the school did, and it is just whether there was a U-turn or not.

Catherine Brioche says:

Hi Huy

Thank you for your feedback; I’m grateful to you for taking the time. I agree with the points you raised. Our first appeal was initiated by the school and rejected. I then took the appeal to the second stage, the Independent Review (£150). This appeal was on the basis of administrative error, as the statistical model used by the school to moderate the TAG produced unreliable data (CAG). It was once again rejected, and OCR justified moderating CAGs to fit historical data for small cohorts in their response to Liz Lightfoot. Litigation or the ICO are our only remaining options. I believe Ofqual will continue to ensure all appeals are rejected. Nothing will change unless a litigation case wins on the basis of GDPR. I’m aware a number of parents are pursuing this.

My son is still one of the lucky ones in all this. One thing the article didn’t mention is he also studied throughout lockdown as he’d wanted to sit the Autumn exams. Like yourself I never trusted the process. Birmingham university however refused to hold or defer his offer based on Autumn exam results and gave him 5 days to accept an offer without the apprenticeship. The safety nets of 2020 all failed him.

The individual appeal process has been all encompassing and we’ve decided to take a break for now. Our focus is to try and prevent repeat mistakes in 2021 by exposing the outstanding unfairness and injustice of 2020.
